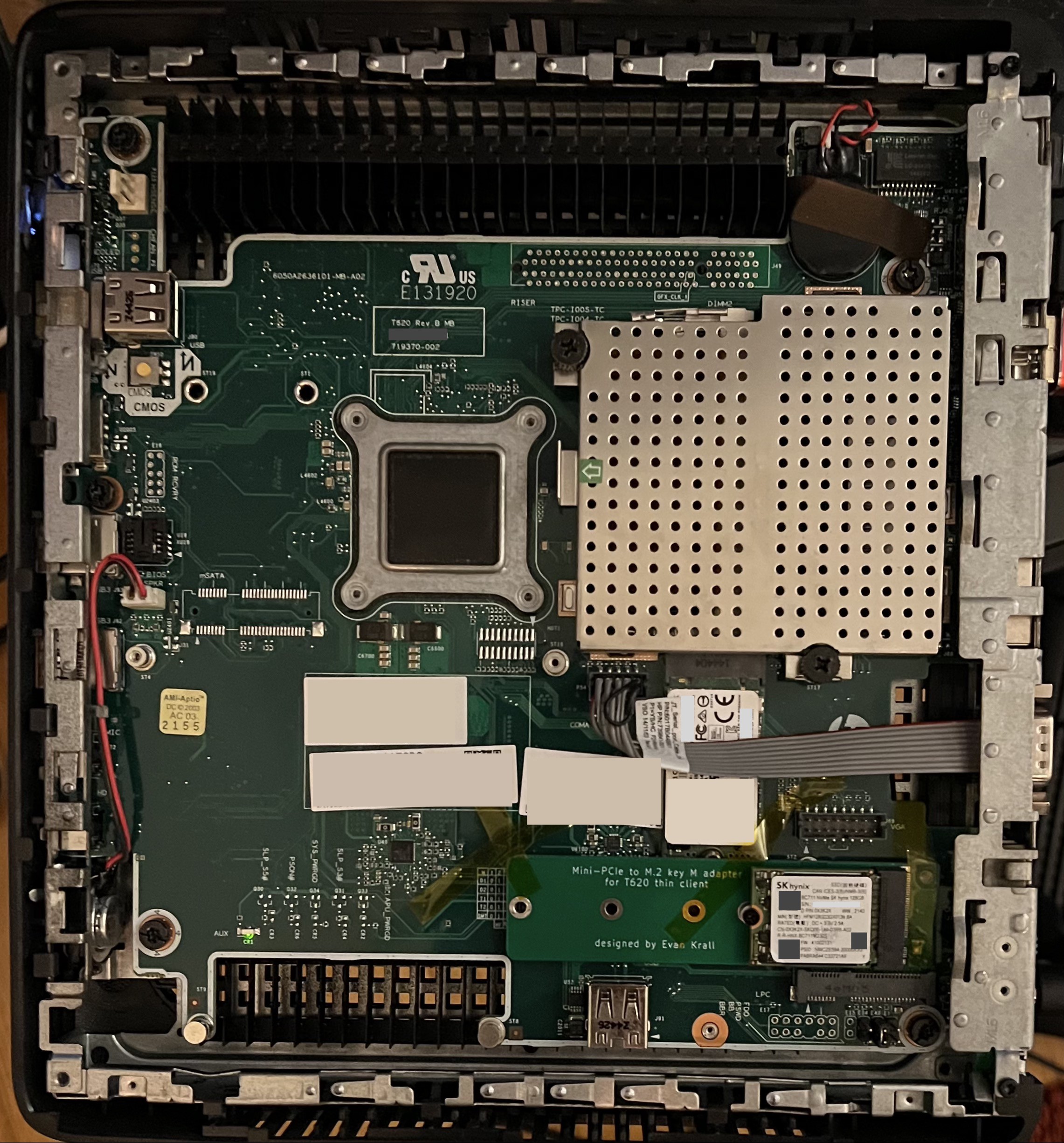

I've installed my adapter board, with a cheap SK Hynix 128 GB SSD, into my T620. Since I don't have any M1.6 screws, I've temporarily secured it with some Kapton tape.

It works!

I've installed Ubuntu on it, using the SATA SSD that came with the T620 as the /boot drive. I am assuming that the BIOS doesn't know how to boot from NVMe drives, but I haven't tested it.

Benchmarks

I've used fio to benchmark the drive, per these instructions. All tests were run with 8 threads and a queue depth of 64.

| Test | Result |
| --- | --- |
| Write throughput (1M writes) | 303 MB/s |
| Write IOPS (4k writes) | 12019.74 IOPS |
| Read throughput (1M reads) | 454 MB/s |
| Read IOPS (4k reads) | 38080 IOPS |

The read throughput seems to have nearly saturated the PCIe connection: the mPCIe slot only has one PCIe 2.0 lane, which has a theoretical maximum speed of 500 MB/s.
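For reference, here is a rough sketch of where the 500 MB/s figure comes from and how close the measured read throughput gets to it. The 5 GT/s signalling rate and 8b/10b encoding are the standard PCIe 2.0 figures, not something measured on this board, and the function name is made up for illustration.

```python
# Standard PCIe 2.0 figures (assumed from the spec, not measured here).
TRANSFER_RATE_GT_S = 5.0      # giga-transfers per second, per lane
ENCODING_EFFICIENCY = 8 / 10  # 8b/10b line encoding: 8 data bits per 10 bits sent
LANES = 1                     # the mPCIe slot exposes a single lane


def pcie_max_mb_s(rate_gt_s, efficiency, lanes):
    """Theoretical link bandwidth in MB/s (1 MB = 10**6 bytes)."""
    data_bits_per_s = rate_gt_s * 1e9 * efficiency * lanes
    return data_bits_per_s / 8 / 1e6


max_mb_s = pcie_max_mb_s(TRANSFER_RATE_GT_S, ENCODING_EFFICIENCY, LANES)

# Measured 1M read throughput from the table above.
measured_read_mb_s = 454
utilisation = measured_read_mb_s / max_mb_s

print(f"theoretical max: {max_mb_s:.0f} MB/s")
print(f"read throughput uses {utilisation:.1%} of the link")
```

This puts the read result at roughly 91% of the raw link rate. Packet headers and other protocol overhead eat into the remaining headroom, so the drive is probably not the bottleneck for sequential reads.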

Observations

I noticed that with the NVMe drive in place, Ubuntu names my network interface enp2s0, whereas without the NVMe drive, the interface is called enp1s0. This means if you take an existing install and add an NVMe drive to it with this adapter, your network configuration might break. I was able to fix this by editing /etc/netplan/00-installer-config.yaml and adding an entry for enp2s0 underneath the entry for enp1s0:

```
$ cat /etc/netplan/00-installer-config.yaml
# This is the network config written by 'subiquity'
network:
  ethernets:
    enp1s0:
      dhcp4: true
    enp2s0:
      dhcp4: true
  version: 2
```
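The rename makes more sense once the name is decoded. Under systemd's predictable interface naming, a name like enp2s0 encodes the PCI bus and slot of the NIC. A plausible explanation, not verified here, is that the NVMe drive claims a PCI bus number ahead of the NIC and pushes it from bus 1 to bus 2. The sketch below decodes the PCI-based form of these names; the `parse_pci_ifname` helper is hypothetical and only handles the simple `en[P<domain>]p<bus>s<slot>[f<function>]` pattern.

```python
import re

# PCI-based predictable Ethernet names: "en", optional P<domain>,
# then p<bus>, s<slot>, and an optional f<function>.
_PCI_IFNAME = re.compile(
    r"^en(?:P(?P<domain>\d+))?p(?P<bus>\d+)s(?P<slot>\d+)(?:f(?P<function>\d+))?$"
)


def parse_pci_ifname(name):
    """Return the PCI location fields encoded in an interface name.

    Returns a dict of the fields that are present, or None if the name
    does not follow the PCI-based pattern (for example "eth0").
    """
    match = _PCI_IFNAME.match(name)
    if match is None:
        return None
    return {key: int(value) for key, value in match.groupdict().items()
            if value is not None}


# The two names seen on the T620, without and with the NVMe drive.
for ifname in ("enp1s0", "enp2s0"):
    print(ifname, parse_pci_ifname(ifname))
```

Both names decode to slot 0 and differ only in the bus number, which is why listing both interfaces in the netplan file covers either configuration.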

Evan
