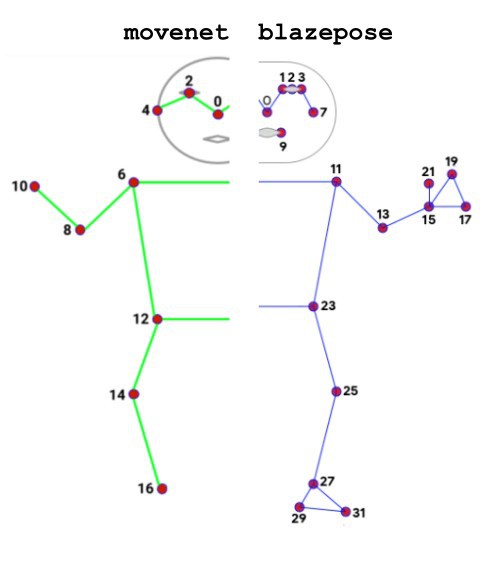

The MoveNet pose estimation model, which has been used for lalelu_drums so far, has the drawback that it provides keypoints for the wrists and ankles but no further detail on the pose of the hands or feet. In contrast, the BlazePose pose estimation model provides additional keypoints for the pinky, index and thumb knuckles, as well as for the heel and the tip of the foot (foot index).
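For reference, the difference between the two keypoint sets can be made explicit with a few lines of Python. The name lists below follow the published 17-keypoint MoveNet and 33-keypoint BlazePose output order. The variable names are my own and only serve as an illustration; they are not part of either model's API.

```python
# Keypoint names in model output order (list position = keypoint index).
MOVENET_KEYPOINTS = [
    "nose", "left_eye", "right_eye", "left_ear", "right_ear",
    "left_shoulder", "right_shoulder", "left_elbow", "right_elbow",
    "left_wrist", "right_wrist", "left_hip", "right_hip",
    "left_knee", "right_knee", "left_ankle", "right_ankle",
]

BLAZEPOSE_KEYPOINTS = [
    "nose", "left_eye_inner", "left_eye", "left_eye_outer",
    "right_eye_inner", "right_eye", "right_eye_outer",
    "left_ear", "right_ear", "mouth_left", "mouth_right",
    "left_shoulder", "right_shoulder", "left_elbow", "right_elbow",
    "left_wrist", "right_wrist", "left_pinky", "right_pinky",
    "left_index", "right_index", "left_thumb", "right_thumb",
    "left_hip", "right_hip", "left_knee", "right_knee",
    "left_ankle", "right_ankle", "left_heel", "right_heel",
    "left_foot_index", "right_foot_index",
]

# Keypoints that only BlazePose provides, mapped to their BlazePose index.
EXTRA_KEYPOINTS = {
    name: i for i, name in enumerate(BLAZEPOSE_KEYPOINTS)
    if name not in MOVENET_KEYPOINTS
}

# Keep only the hand and foot details (drop the extra face keypoints).
HAND_FOOT_EXTRAS = {
    name: i for name, i in EXTRA_KEYPOINTS.items()
    if not name.startswith(("left_eye", "right_eye", "mouth"))
}

if __name__ == "__main__":
    for name, i in HAND_FOOT_EXTRAS.items():
        print(i, name)
```

This prints the ten hand and foot keypoints (indices 17 to 22 and 29 to 32) that MoveNet does not provide.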

While a pretrained TensorFlow model for MoveNet is available from Kaggle, BlazePose is only available as a TensorFlow.js model. Therefore, BlazePose could not be used for lalelu_drums so far, since the TensorRT conversion requires a TensorFlow model as input (see log entry #01).

Fortunately, I have now found a way to convert the BlazePose TensorFlow.js model to a TensorFlow model and compile it for fast inference with TensorRT. The conversion can be done with the tfjs-to-tf converter by Patrick Levin.

In the following video you can see the keypoints of the feet (green dots), tracked at 100 fps. For each leg, the average of the knee, ankle and heel keypoints is computed and visualized in the Pure Data patch ('right_y_plot' and 'left_y_plot'). This value is used to create trigger events, which are visualized by bang objects in Pure Data and eventually trigger the rimclick and bass drum sounds that can be heard.
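The log does not spell out how the averaged value is turned into trigger events, so the following is only a minimal Python sketch under stated assumptions, not the lalelu_drums implementation (which does the triggering in Pure Data). It assumes that the y coordinates are what gets averaged (as the plot names suggest), that keypoints arrive as (x, y) pairs indexed by BlazePose keypoint index, and that a trigger is a threshold crossing with hysteresis. `leg_average_y`, `HysteresisTrigger` and both threshold values are hypothetical names and numbers chosen for illustration.

```python
from dataclasses import dataclass
from typing import Sequence, Tuple

# BlazePose indices of the knee, ankle and heel keypoints for each leg.
LEG_KEYPOINTS = {
    "left": (25, 27, 29),
    "right": (26, 28, 30),
}


def leg_average_y(keypoints: Sequence[Tuple[float, float]], side: str) -> float:
    """Mean y coordinate of the knee, ankle and heel of one leg.

    `keypoints` holds one (x, y) pair per BlazePose keypoint index.
    """
    indices = LEG_KEYPOINTS[side]
    return sum(keypoints[i][1] for i in indices) / len(indices)


@dataclass
class HysteresisTrigger:
    """Fires once when the value rises above `on_threshold` and re-arms
    only after the value has dropped below `off_threshold` again.

    The gap between the two thresholds suppresses repeated triggers
    caused by keypoint jitter around a single threshold.
    """
    on_threshold: float
    off_threshold: float
    armed: bool = True

    def update(self, value: float) -> bool:
        if self.armed and value > self.on_threshold:
            self.armed = False
            return True
        if not self.armed and value < self.off_threshold:
            self.armed = True
        return False


if __name__ == "__main__":
    trigger = HysteresisTrigger(on_threshold=0.8, off_threshold=0.7)
    samples = [0.6, 0.75, 0.85, 0.9, 0.78, 0.65, 0.82]
    print([trigger.update(v) for v in samples])
    # prints [False, False, True, False, False, False, True]
```

In image coordinates y usually grows downwards, so with this convention a stomping foot increases the averaged value. One trigger instance per leg, fed once per frame (100 times per second here), would yield one event per stomp that could then be mapped to the rimclick or bass drum sound.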
