The side project highrate_longexp is finished, so a synchronized dual camera is now available for lalelu_drums. The benefit of using two cameras is that, for a given frame rate (100 Hz), a long exposure time (20 ms) can be realized, which reduces the illumination requirements. The two cameras each run at 50 frames per second and are synchronized to acquire frames in a time-interleaved fashion.
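The timing can be illustrated with a short sketch. It assumes that the second camera is triggered half a frame period (10 ms) after the first one, which is what a combined rate of 100 Hz from two 50 Hz cameras implies. The function name and the camera labels "A" and "B" are made up for this illustration.

```python
from heapq import merge


def interleaved_schedule(n_frames_per_camera, period_ms=20, offset_ms=10):
    """Return the merged trigger schedule as a list of (time_ms, camera) tuples.

    period_ms: frame period of each camera (20 ms at 50 frames per second).
    offset_ms: assumed trigger delay of camera B relative to camera A.
    """
    cam_a = [(k * period_ms, "A") for k in range(n_frames_per_camera)]
    cam_b = [(k * period_ms + offset_ms, "B") for k in range(n_frames_per_camera)]
    # Both lists are already sorted by time, so merge() interleaves them.
    return list(merge(cam_a, cam_b))


if __name__ == "__main__":
    for time_ms, camera in interleaved_schedule(3):
        print(f"t = {time_ms:3d} ms  camera {camera}")
```

Each camera has the full 20 ms between its own triggers available for exposure, while the merged stream delivers a new frame every 10 ms.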

The two cameras are mounted side by side to minimize the distance between the sensors. Still, there is an offset and a parallax between the viewpoints. Here, I present an algorithm that fuses the two camera streams into a single consistent stream of pose estimations at a rate of 100 Hz.

The first step is to run a human pose estimation model on each camera frame, regardless of which camera it comes from. Here, blazepose is used, but movenet would also work. These models have no memory, so they treat each frame independently. The result is a stream of pose estimations that jumps back and forth between the two viewpoints.

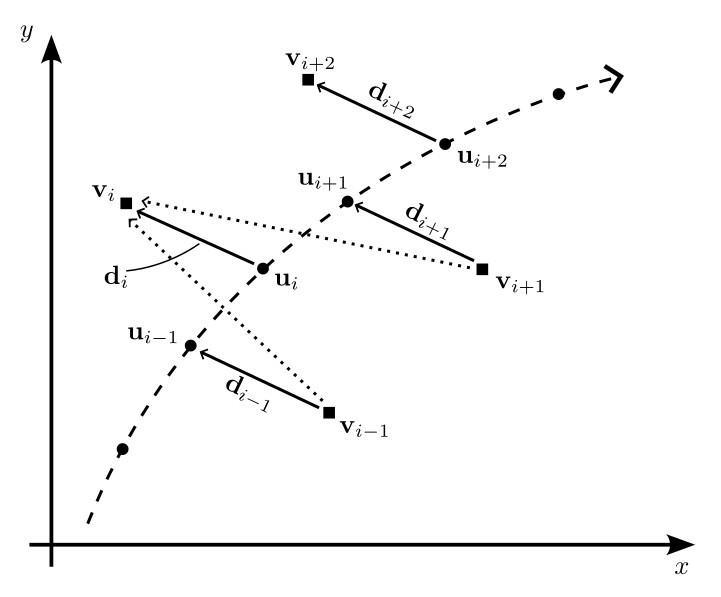

The situation is shown in figure 1 for a single keypoint. For each time point i there is a true position u of the keypoint, and the true positions form a trajectory (dashed line). The observed positions v can be modeled by adding a displacement vector d, which can be different for each time point.

Figure 1: Model
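Written out, with the index i counting the frames of both cameras in their interleaved order, the model described above reads:

$$
v_i = u_i + d_i
$$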

To make the sensor fusion possible, we assume that d varies only slowly. In other words, if the keypoint does not move, we assume a constant d, and if the keypoint moves, d may change, but not abruptly. With this assumption, the following update rules can be used to find estimates d_hat for the time-varying displacement d and u_hat for the true positions u of the keypoint.

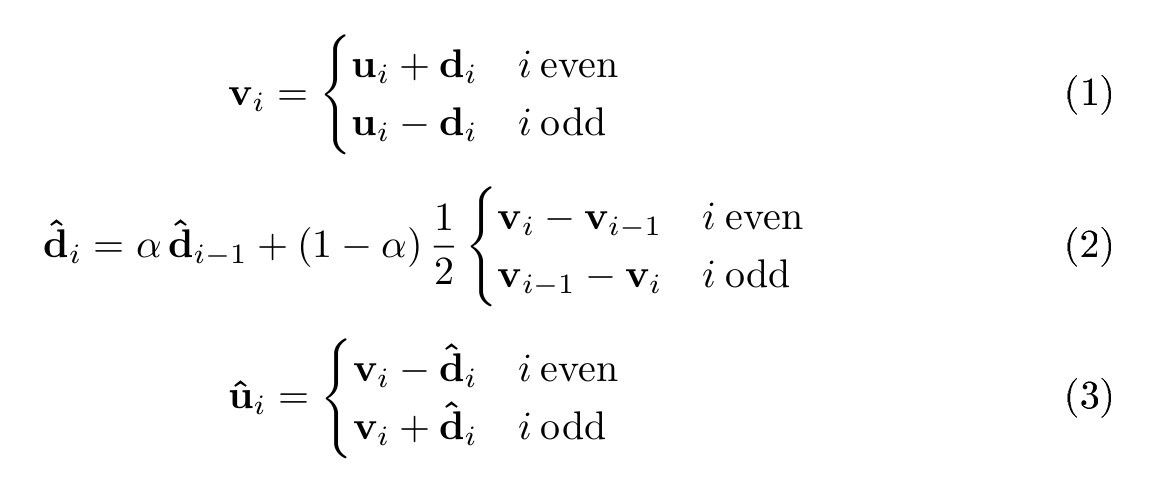

Figure 2: Formulas

The central idea is to use a running average of the difference between consecutive observations v (dotted vectors) to find d_hat (formula 2). alpha controls the amount of averaging and was set to 0.8 for the example videos. d_hat can be initialized from a single observation v at the very beginning of the measurement.
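The following Python sketch shows one way such a running average could be implemented. It is not a transcription of the formulas in figure 2 but one plausible form under explicit assumptions: the displacement is taken to flip its sign from one frame to the next (the true position lies midway between the two viewpoints), so half of the difference between consecutive observations serves as a noisy measurement of d. The function name `fuse_keypoint`, the zero initialization of d_hat and the sign convention are all choices made for this illustration.

```python
import numpy as np


def fuse_keypoint(observations, alpha=0.8):
    """Fuse alternating-camera observations of a single keypoint.

    observations: array-like of shape (n, 2); consecutive rows come from
                  alternating cameras.
    alpha:        weight of the previous estimate in the running average.
    Returns (u_hat, d_hat), both arrays of shape (n, 2).
    """
    v = np.asarray(observations, dtype=float)
    u_hat = np.empty_like(v)
    d_hat = np.zeros_like(v)  # assumption: start with zero displacement
    u_hat[0] = v[0]
    for i in range(1, len(v)):
        # For a resting keypoint, v[i] - v[i-1] equals 2 * d[i] under the
        # assumed sign-flipping model, so half of it measures d[i].
        half_step = 0.5 * (v[i] - v[i - 1])
        # Running average; the previous estimate enters with flipped sign
        # because it belongs to the other camera.
        d_hat[i] = alpha * (-d_hat[i - 1]) + (1.0 - alpha) * half_step
        u_hat[i] = v[i] - d_hat[i]
    return u_hat, d_hat


if __name__ == "__main__":
    # Synthetic test data: resting keypoint, observations zigzag by +/- d.
    u_true = np.array([100.0, 50.0])
    d_true = np.array([3.0, -1.0])
    signs = np.where(np.arange(100) % 2 == 0, 1.0, -1.0)[:, None]
    v = u_true + signs * d_true
    u_hat, d_hat = fuse_keypoint(v)
    print("last raw observation:", v[-1])
    print("last fused estimate: ", u_hat[-1])
```

With this form, a steady motion of the keypoint contributes to the averaged difference with alternating sign and is therefore largely suppressed, while the displacement itself accumulates. A larger alpha smooths d_hat more strongly but makes it follow changes in d more slowly.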

Video 1 shows the behaviour of the sensor fusion algorithm for a single keypoint. The pose estimations for the two different camera viewpoints are drawn in green, forming two separate trajectories. After activating the sensor fusion algorithm, the fused trajectory is drawn in red.

Video 1: Explanation

In the video, the original rectangular frames were cropped to a square shape so that they can be used as input to blazepose. By cropping the frames from the two cameras differently, the offset could be further reduced (not shown).

In conclusion, this sensor fusion strategy relies strongly on the performance of the pose estimation network, which does the heavy lifting of interpreting the different camera viewpoints in a consistent way. It is much easier to fuse the keypoint vectors than the original frames.

Finally, video 2 shows a demonstration of the use of the highrate_longexp camera together with the described algorithm in the lalelu_drums application for playing a bossa rhythm.

Video 2: Bossa rhythm
