Let me explain. Something very subtle is going on here. First things first: life is more than just a linked list, or a doubly linked list, or some other abstraction based on some kind of tree. Yet, as stated earlier, while working on Atari Genetics I feel like I need to do a bunch of Lisp-related stuff, like creating a bunch of really abstract things such as functions (whether THEY are abstract or not) with names like "bind keysets" and so on. So, as a debugging exercise, I decided to put some work into getting "yet another version of Eliza" working again.

Well, then I decided to do something else. Of course, the code is broken, but that's not the point. Or maybe that is the point, because sometimes something weird and wonderful happens. I just realized that instead of searching for a single keyword in the user input and then selecting a canned response, why not identify all of the keywords in the input, prepare a set of candidate responses, and put them into some kind of priority queue, sort of like a DJ playlist editor? This is going to be a departure from the original Eliza code on the one hand, while on the other it can eventually be made backward compatible with any files that conform to the original algorithm.
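To make the playlist idea a bit more concrete, here is a minimal sketch of what queueing one candidate reply per keyword might look like. None of this is from the actual Eliza source: the `candidate` struct, the `by_priority` comparator, and the `build_playlist` and `play_order` helpers are all hypothetical names, and how a priority would really be assigned is left open.

```cpp
#include <queue>
#include <string>
#include <vector>

// Hypothetical candidate reply; not a type from the project's source.
struct candidate {
    int priority;          // higher means "play this one sooner"
    std::string keyword;   // keyword that triggered the reply
    std::string reply;     // canned response text
};

// Order candidates so the highest priority comes out of the queue first.
struct by_priority {
    bool operator()(const candidate &a, const candidate &b) const {
        return a.priority < b.priority;
    }
};

using playlist = std::priority_queue<candidate, std::vector<candidate>, by_priority>;

// Queue one candidate for every keyword found, instead of stopping at the first hit.
playlist build_playlist(const std::vector<candidate> &found) {
    playlist q;
    for (const candidate &c : found) {
        q.push(c);
    }
    return q;
}

// Drain a copy of the queue and report the keywords in the order they would play.
std::vector<std::string> play_order(playlist q) {
    std::vector<std::string> order;
    while (!q.empty()) {
        order.push_back(q.top().keyword);
        q.pop();
    }
    return order;
}
```

A file written for the original algorithm could presumably be handled by giving every keyword the same priority and only ever playing the first entry, though that is a guess about how backward compatibility might work rather than a description of the current code.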

It seems simple enough. It is never that simple, of course. Yet it is arguably parallelizable and extensible, and therefore it is well worth considering how one might get it to work not only on a bare 6502 but also on a CUDA platform, since that seems like the obvious direction given what I have figured out about what I want to do with the Atari-Genetics stuff.

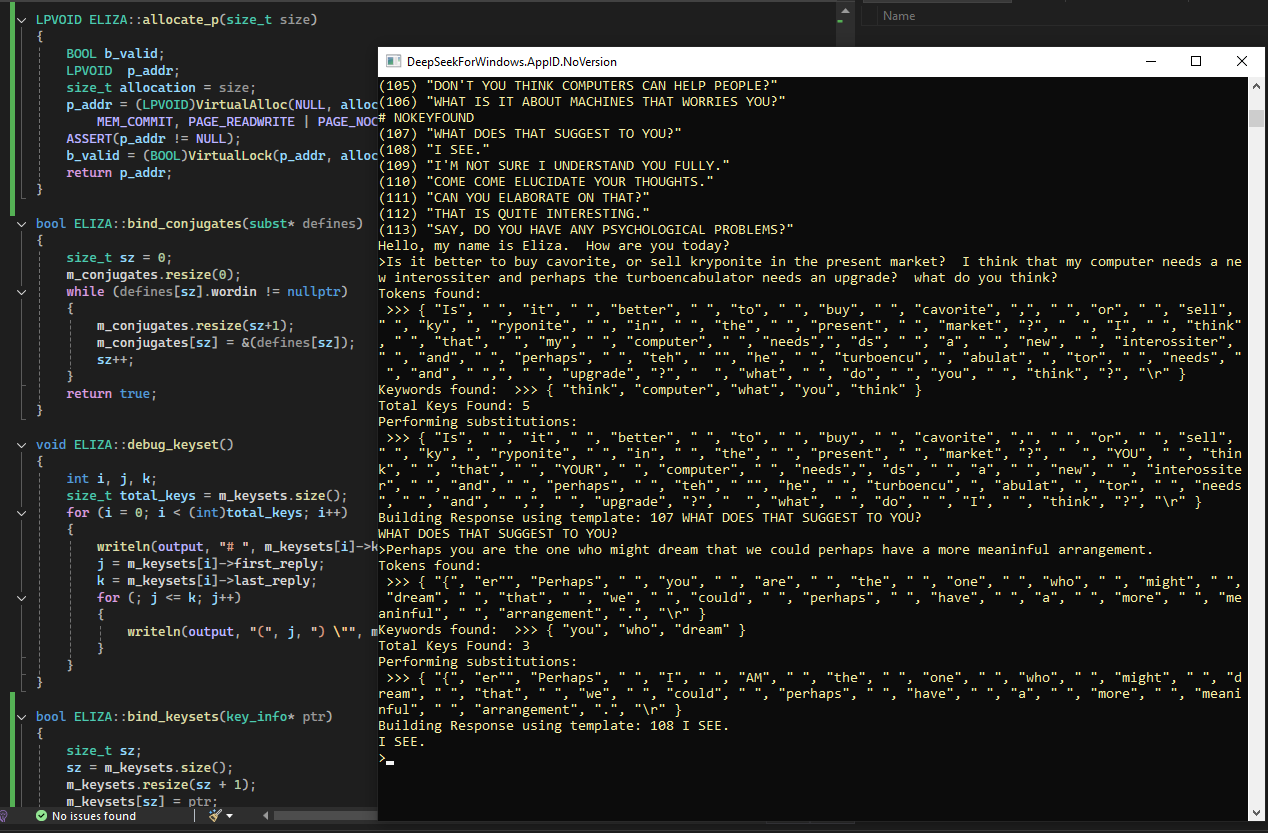

So I asked Eliza, "Is it better to buy cavorite or sell kryptonite in the present market? I think that my computer needs a new interossiter, and perhaps the turboencabulator needs an upgrade. What do you think?" The tokenizer did exactly what it was supposed to do, and responded with the debug message:

Keywords found: >>> { "think", "computer", "what", "you", "think" }

Total Keys Found: 5

So far so good. So I threw some more stream-of-consciousness stuff at it, even if it is "just Eliza" and not quite up to doing my priority queue stuff yet. I asked it, "Perhaps you are the one who might dream that we could perhaps have a more meaningful arrangement.", knowing of course that all I would get is a generic response, since the priority queue and playlist manager are not working yet. But oh, what fun when Eliza said this:

Keywords found: >>> { "you", "who", "dream" }

Total Keys Found: 3
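For anyone wondering what a scan like that involves, here is a minimal sketch, assuming a plain single-word keyword set and a simple lowercase-and-split tokenizer. The `find_keywords` function is a hypothetical stand-in, not the tokenizer from my source files, and it ignores multi-word key phrases entirely.

```cpp
#include <cctype>
#include <cstddef>
#include <set>
#include <string>
#include <vector>

// Split the input into lowercase alphabetic words and keep, in order, every
// word that appears in the keyword set. Repeated keywords are kept on purpose.
std::vector<std::string> find_keywords(const std::string &input,
                                       const std::set<std::string> &keys) {
    std::vector<std::string> found;
    std::string word;
    // Run one step past the end so the final word is flushed like any other.
    for (std::size_t i = 0; i <= input.size(); ++i) {
        unsigned char ch = (i < input.size())
                               ? static_cast<unsigned char>(input[i])
                               : static_cast<unsigned char>(' ');
        if (std::isalpha(ch)) {
            word += static_cast<char>(std::tolower(ch));
        } else {
            if (!word.empty() && keys.count(word) != 0) {
                found.push_back(word);
            }
            word.clear();
        }
    }
    return found;
}
```

With a keyword set that contains "think", "computer", "what", "you", "who", and "dream", this sketch picks out the same keywords, in the same order, as the two debug messages above. Note that anything non-alphabetic acts as a separator, so a word like "don't" would be split in two.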

So now we have eight keys that we haven't done anything with, even if there is something almost Freudian about my own reaction to what it is saying. Just put all of the unused keywords together:

THINK COMPUTER - WHAT YOU THINK - YOU WHO DREAM.

I see an interesting use case for transformers here, despite the crazy simplicity of this sort of thing. There is another subtle change to the code base that I should mention. In Atari-Genetics I call "bind_dataset", which is a very simple abstraction that uses a method call to assign a value to a function pointer, or something similar, so as to make the main method more generic. The same technique can be used in the Eliza code to separate the "narrative" part of the program from the algorithm itself. This encapsulates the fact that, in the current version of Eliza, the "narrative" is initially stored in a collection of pointers to char and so on, which were previously accessed through extern pointers declared wherever.

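    // replies, conjugates, and keywords are the narrative tables defined elsewhere.

    // Return the i-th canned reply.
    char *narrative::get_response(int i)
    {
        char *result;
        result = replies[i];
        return result;
    }

    // Count the replies; the table is expected to end with a nullptr sentinel.
    size_t narrative::get_response_count()
    {
        size_t sz = 0;
        while (replies[sz] != nullptr)
        {
            sz++;
        }
        return sz;
    }

    // Return a pointer to the first entry of the conjugation table.
    subst *narrative::get_conjugates()
    {
        subst *result = &(conjugates[0]);
        return result;
    }

    // Return a pointer to the first entry of the keyword table.
    key_info *narrative::get_keywords()
    {
        key_info *result = &(keywords[0]);
        return result;
    }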

Simple use of a namespace, which might later be promoted to a class, does the trick:

    namespace narrative
    {
        size_t get_response_count();
        char *get_response(int i);
        subst *get_conjugates();
        key_info *get_keywords();
    }

That allows for this little bit of fun in the C++ constructor:

    ELIZA::ELIZA()
    {
        int j = 0;                  // first reply index of the current reply block
        size_t total_keys = 0;
        size_t total_replies = 0;
        size_t responses;

        m_keywords = narrative::get_keywords();
        subst *conjugates = narrative::get_conjugates();
        bind_conjugates(conjugates);
        m_keysets.reserve(64);
        m_keysets.resize(0);

        // Walk the keyword table until the null key_str sentinel.
        while (m_keywords[total_keys].key_str != NULL)
        {
            responses = m_keywords[total_keys].responses;
            // A count of -1 leaves j and total_replies alone, so the keyword
            // reuses the reply block of the keyword before it.
            if (responses != (size_t)-1)
            {
                j = (int)total_replies;
                total_replies += responses;
            }
            m_keywords[total_keys].first_reply = j;
            m_keywords[total_keys].current_reply = j;
            m_keywords[total_keys].last_reply = (int)total_replies - 1;
            bind_keysets(&m_keywords[total_keys]);
            total_keys++;
        }
        n1 = (int)total_keys - 1;
        bind_responses(total_replies);
        defaultKey = (int)total_replies - 1;
        // bTreeType<pstring<256> >* ptr = make_tree(replies);
    }
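The constructor only sets up the `first_reply`, `current_reply`, and `last_reply` indices; how they get used is not shown here. A minimal sketch, assuming the usual Eliza habit of cycling through a keyword's replies in order and wrapping around, might look like this. The `reply_range` struct and `next_reply` function are hypothetical and only mirror those three fields.

```cpp
// Hypothetical mirror of the three index fields set in the constructor;
// the real key_info has other members as well.
struct reply_range {
    int first_reply;
    int current_reply;
    int last_reply;
};

// Return the index of the reply to use now, then advance the cursor,
// wrapping back to the first reply once the last one has been used.
int next_reply(reply_range &r) {
    int index = r.current_reply;
    if (r.current_reply >= r.last_reply) {
        r.current_reply = r.first_reply;
    } else {
        r.current_reply = r.current_reply + 1;
    }
    return index;
}
```

For a keyword whose block covers replies 4 through 6, successive calls would hand back 4, 5, 6, and then 4 again.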

The full source files will be on Git, eventually. Obviously, I have some bugs that I want to work out, but I hope that I am on the right track with this whole parallel to the "Mixture of Experts" model. I suspect there is going to turn out to be a VERY generic approach to a whole bunch of stuff, with a number of algorithms that will find good use not just with the new super sexy AI stuff, but also with some very traditional ways of doing things.

glgorman
