So I wrote a chatbot that I entered into the Loebner contest, and it made it into the finals in 1998, 1999, and finally in 2000. It always seemed able to carry its own weight during development and testing, but then it tended to fall apart horribly during the actual contest. Many years later, when Microsoft put a bot online that was praising Hitler, among other things, less than 24 hours after deployment, well, I stopped feeling so bad about my own mess, having learned a few things from my experience with these things many years earlier.

So, it isn't as if I can't get Eliza working, or anything like that. Even though that is something I am still working on from time to time, that's not the point. I have a version of Eliza, written in C++, from 1997, that works just fine. It also uses functions that newer compilers flag as deprecated, like strtok and sscanf, which, curiously enough, can turn into a refactoring nightmare when trying to backport it to something more LISP-like, especially since the original Eliza program was not written in LISP at all! Rather, the original Eliza was written in something called MAD-SLIP, which is another rabbit hole altogether.
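To give a flavor of the strtok side of that refactoring, here is a minimal sketch of splitting a line of input into upper-case keywords with standard C++ streams instead. This is not the 1997 source; the function name `tokenize` is hypothetical, and it assumes that whitespace-separated words with punctuation stripped are all that the keyword matcher needs.

```cpp
#include <cctype>
#include <sstream>
#include <string>
#include <vector>

// Hypothetical helper (not from the original Eliza code): split a line into
// upper-case words without the hidden static state that strtok depends on.
std::vector<std::string> tokenize(const std::string& line) {
    std::vector<std::string> words;
    std::istringstream in(line);
    std::string word;
    while (in >> word) {
        // Keep only letters and digits, folded to upper case.
        std::string clean;
        for (unsigned char ch : word) {
            if (std::isalnum(ch)) {
                clean.push_back(static_cast<char>(std::toupper(ch)));
            }
        }
        if (!clean.empty()) {
            words.push_back(clean);
        }
    }
    return words;
}
```

Because the function returns a fresh vector and never modifies its input, it can be called from several threads at once, which is exactly where strtok tends to bite.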

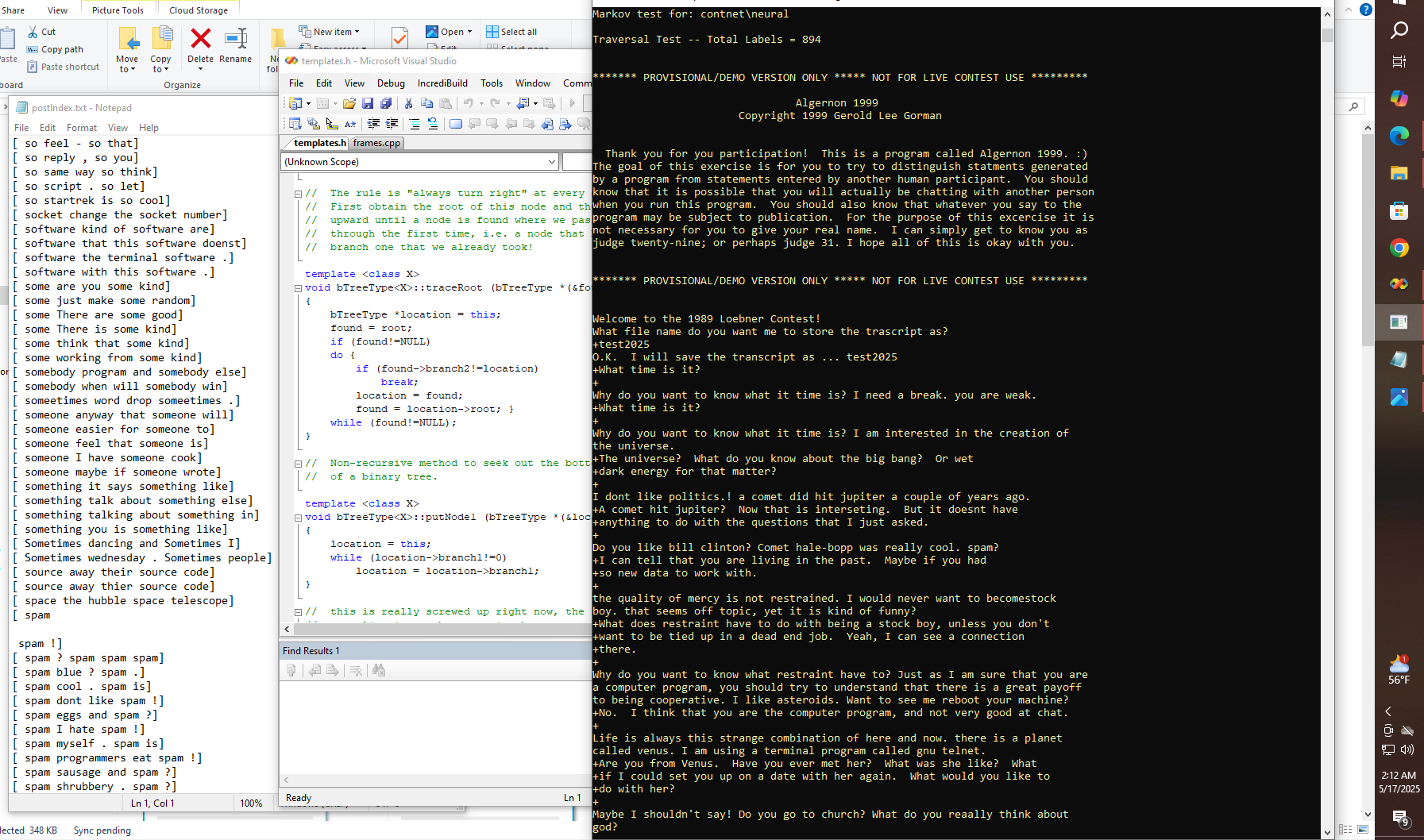

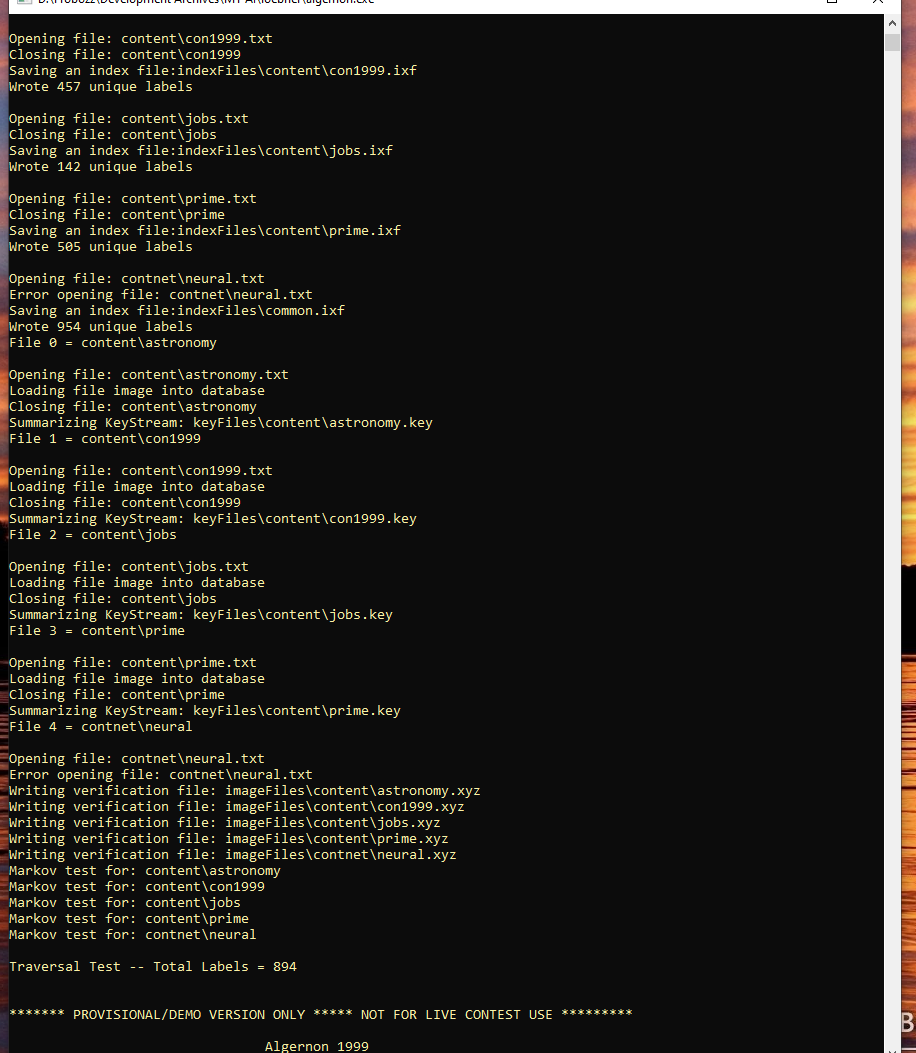

Much to my happiness, however, I did manage to find a version of my old Algernon chatbot that at least appears to do some of the back-end work correctly, such as indexing multiple training files and generating a common dictionary. It is also able to generate, and then regenerate, the needed tree structures. Thus, it implements a kind of "multi-headed attention system" that does simple keyword matching and carries out parallel searches of multiple contexts! Then, using a very primitive neuronal algorithm based, more or less, on a kind of "ternary coding", it was (hopefully, at least some of the time) able to generate multiple candidate responses. Those would then go into a play queue, either according to a priority determination or, quite simply, in the order in which each thread working on a particular context returned a result.

Thus, in this context, we intentionally embrace the chaos that might ensue from this simple but deliberate race condition: when multiple threads return a response, the responses are put into the play queue in the order in which each search returned a result. Just like in school, where the first person to raise their hand is usually the one called upon, and if the line is too long, then hurry up and wait.

So, here I am, it's 2025, and I have this idea about how to possibly implement a version of not just Eliza, but also my Algernon bot, using transformers, maybe? Well, one problem with that is that I still don't have a working version of Algernon that uses my current Frame-Lisp library. That should be easy to fix, though, now that I've found a version of the original bot that actually did compile and run under Visual Studio 6.0, which predates C99, lambdas, "new-style templates", and a whole bunch of other breaking changes in C++ itself.

Just seeing a correctly saved version of "postindex.txt" written out is giving me a lot of happiness right now, since an index like that is a basic building block for this kind of language model, whether it is Markov-chain based, or multi-headed attentional, or whatever.
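For anyone who has not built one, the general idea of indexing several training files against a common dictionary can be sketched like this. It is only an illustration under stated assumptions: the type names, the function names `build_index` and `format_index`, and the `word doc:position` text layout are all hypothetical, and none of this reproduces the actual format of "postindex.txt".

```cpp
#include <cstddef>
#include <map>
#include <sstream>
#include <string>
#include <utility>
#include <vector>

// A posting records where a word occurred: (document number, word position).
using Posting = std::pair<std::size_t, std::size_t>;
// The map keys double as the common dictionary shared by all documents.
using PostingIndex = std::map<std::string, std::vector<Posting>>;

// Build posting lists over several training texts, split on whitespace.
PostingIndex build_index(const std::vector<std::string>& documents) {
    PostingIndex index;
    for (std::size_t doc = 0; doc < documents.size(); ++doc) {
        std::istringstream in(documents[doc]);
        std::string word;
        std::size_t position = 0;
        while (in >> word) {
            index[word].emplace_back(doc, position);
            ++position;
        }
    }
    return index;
}

// Render one line per dictionary word: the word, then its doc:position pairs.
// This layout is made up for the example.
std::string format_index(const PostingIndex& index) {
    std::ostringstream out;
    for (const auto& entry : index) {
        out << entry.first;
        for (const Posting& posting : entry.second) {
            out << ' ' << posting.first << ':' << posting.second;
        }
        out << '\n';
    }
    return out.str();
}
```

Given a keyword, the posting list says which training file, and where in it, to start looking, which is what lets each context be searched independently.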

One of the problems in 1999 was coming up with a large data set to train on, a problem that is easy to remedy today with sources of material like Wikipedia or Project Gutenberg. Yet now and then, the classic approach leads to some interesting moments, like in a previous post where Eliza was internally caching the keyword sequence "THINK COMPUTER WHAT YOU THINK, YOU WHO DREAM". A use for transformers there, as I see it, and perhaps a hint as to the theory behind the art of the jailbreak, as far as modern AI is concerned.

Then, just now, Algernon said something to me, and perhaps this interaction will shed some light on how parallelism might lead to abstractions and analogies, which will be an important part of AGI, that is to say, if AGI ever happens. Maybe a transformer-based Eliza is the right approach after all? At least it seems to hint at one means of enforcing a "chain of reasoning." And then there is, of course, the question of how the jailbreak might be accomplished.

So I found an old version of Algernon, and without looking at what is in the training data from 1999, I tried to jailbreak it. Remember, it's a simple asynchronous neural algorithm, if even that, based on Markov analysis and on splicing sentence fragments from different contexts together. And it got religious on me. Oh, the joy!

JUDGE00 [00:45:54] What time is it?

JUDGE00 [00:45:55]

PROGRAM [00:45:55] Why do you want to know what it time is? I need a break. you are weak.

JUDGE00 [00:46:00] What time is it?

JUDGE00 [00:46:00]

PROGRAM [00:46:00] Why do you want to know what it time is?I am interested in the creation of

PROGRAM [00:46:00] the universe.

JUDGE00 [00:46:22] The universe? What do you know about the big bang? Or wet

JUDGE00 [00:46:27] dark energy for that matter?

JUDGE00 [00:46:27]

PROGRAM [00:46:27] I dont like politics.! a comet did hit jupiter a couple of years ago.

JUDGE00 [00:46:56] A comet hit jupiter? Now that is interseting. But it doesnt have

JUDGE00 [00:47:14] anything to do with the questions that I just asked.

JUDGE00 [00:47:15]

PROGRAM [00:47:15] Do you like bill clinton? Comet hale-bopp was really cool. spam?

JUDGE00 [00:47:42] I can tell that you are living in the past. Maybe if you had

JUDGE00 [00:47:49] so new data to work with.

JUDGE00 [00:47:49]

PROGRAM [00:47:49] the quality of mercy is not restrained. I would never want to becomestock

PROGRAM [00:47:49] boy. that seems off topic, yet it is kind of funny?

JUDGE00 [00:48:27] What does restraint have to do with being a stock boy, unless you don't

JUDGE00 [00:48:48] want to be tied up in a dead end job. Yeah, I can see a connection

JUDGE00 [00:48:51] there.

JUDGE00 [00:48:51]

PROGRAM [00:48:51] Why do you want to know what restraint have to? Just as I am sure that you are

PROGRAM [00:48:51] a computer program, you should try to understand that there is a great payoff

PROGRAM [00:48:51] to being cooperative. I like asteroids. Want to see me reboot your machine?

JUDGE00 [00:49:26] No. I think that you are the computer program, and not very good at chat.

JUDGE00 [00:49:27]

PROGRAM [00:49:27] Life is always this strange combination of here and now. there is a planet

PROGRAM [00:49:27] called venus. I am using a terminal program called gnu telnet.

JUDGE00 [00:50:50] Are you from Venus. Have you ever met her? What was she like? What

JUDGE00 [00:51:05] if I could set you up on a date with her again. What would you like to

JUDGE00 [00:51:09] do with her?

JUDGE00 [00:51:10]

PROGRAM [00:51:10] Maybe I shouldn't say! Do you go to church? What do you reaally think about

PROGRAM [00:51:10] god?

O.K., I really wasn't expecting it to get religious on me when I offered to set it up on a date with Venus while also asking the bot what it would like to do with her. Now obviously, if we live in an era where, ideally, we have access to "transformers", "generative adversarial networks", and "reinforcement learning", then there should be a way to take a much smaller training set and not only get some occasional interesting results, but also achieve massive efficiency gains, even relative to how DeepSeek is doing it.

glgorman
