Getting a bunch of stuff in PuTTY that looks like this, followed by the longitude and latitude.

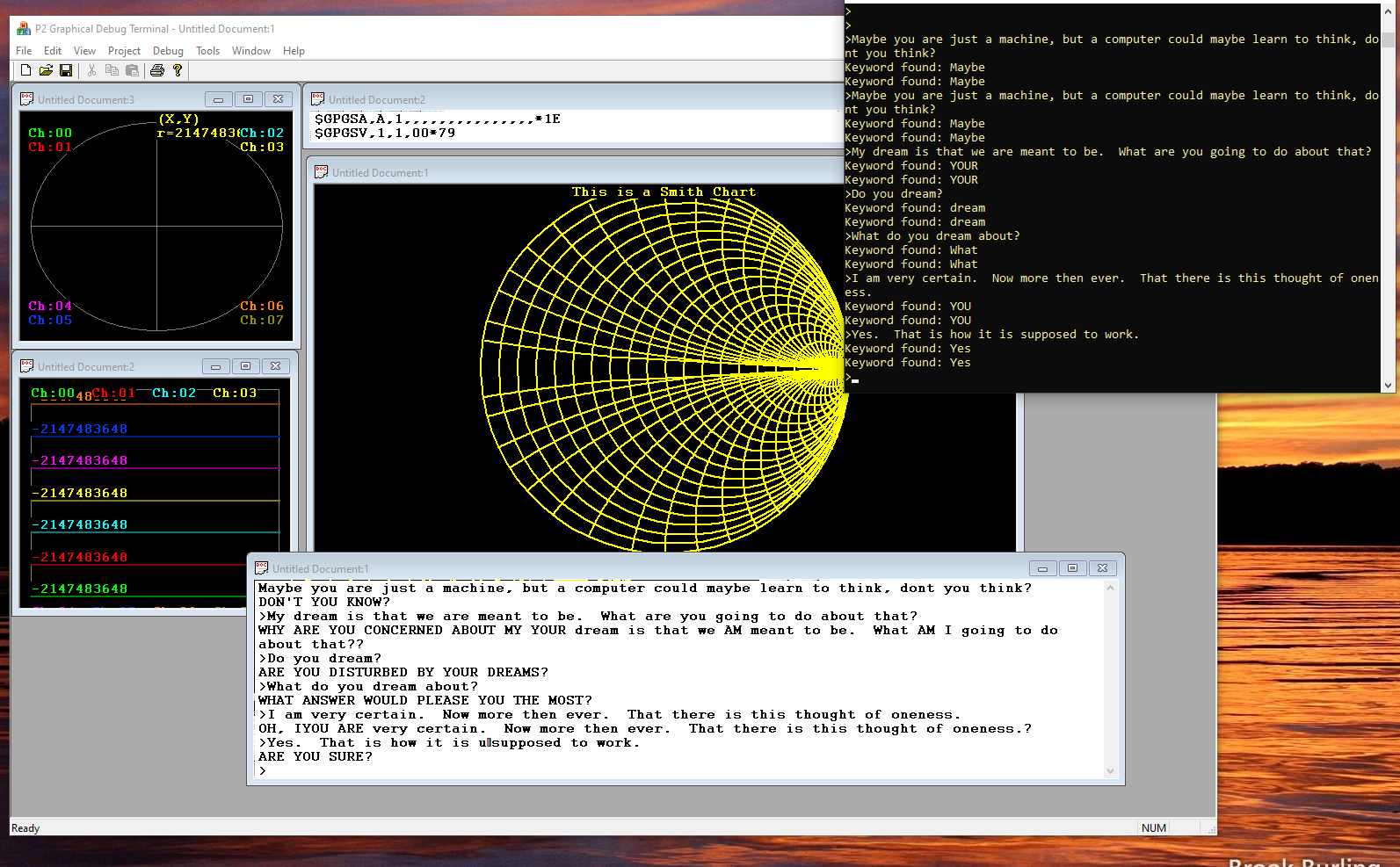

$GPGSA,A,1,,,,,,,,,,,,,,,*1E

$GPGSV,3,1,11,06,62,108,,24,54,258,28,19,52,045,23,11,44,172,*79

$GPGSV,3,2,11,12,35,304,,22,32,099,,17,26,055,,14,16,108,*72

$GPGSV,3,3,11,25,04,299,,03,04,039,,32,,327,*42

$GPRMC,103558.000,V, ........ etc.

It looks like the 103558.000 part is the current time as GMT (10:35:58), and the $GPGSV lines supposedly carry the number of satellites in view, followed by the satellite number, the elevation (EL) and azimuth (AZ), and the SNR of each satellite.
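As a rough sketch of what pulling those fields out of a $GPGSV line could look like, here is a small comma splitter plus a parser. The names `SatInfo`, `split_fields`, and `parse_gsv` are made up for illustration and are not part of the existing tokenizer. Empty fields (a satellite that is listed but not tracked) come back as -1, and the checksum after the `*` is dropped without being verified.

```cpp
#include <cassert>
#include <cstddef>
#include <cstdlib>
#include <string>
#include <vector>

// One satellite entry from a $GPGSV sentence; -1 marks an empty field.
struct SatInfo {
    int prn;        // satellite number
    int elevation;  // degrees above the horizon
    int azimuth;    // degrees clockwise from north
    int snr;        // signal strength; empty when the satellite is not tracked
};

// Split on commas, keeping empty fields (NMEA uses them for "no data").
std::vector<std::string> split_fields(const std::string& body) {
    std::vector<std::string> out;
    std::string::size_type start = 0;
    while (true) {
        std::string::size_type comma = body.find(',', start);
        if (comma == std::string::npos) {
            out.push_back(body.substr(start));
            break;
        }
        out.push_back(body.substr(start, comma - start));
        start = comma + 1;
    }
    return out;
}

static int to_int_or_minus1(const std::string& s) {
    return s.empty() ? -1 : std::atoi(s.c_str());
}

// Parse one $GPGSV sentence. Returns the satellites listed in it and
// stores the "satellites in view" total in *in_view (-1 on failure).
std::vector<SatInfo> parse_gsv(const std::string& sentence, int* in_view) {
    assert(in_view != NULL);
    *in_view = -1;
    std::vector<SatInfo> sats;
    if (sentence.empty() || sentence[0] != '$') return sats;

    // Strip the leading '$' and the trailing "*hh" checksum, if present.
    std::string::size_type star = sentence.find('*');
    std::string body = sentence.substr(
        1, star == std::string::npos ? std::string::npos : star - 1);

    std::vector<std::string> f = split_fields(body);
    if (f.size() < 4 || f[0] != "GPGSV") return sats;
    *in_view = to_int_or_minus1(f[3]);

    // Satellites follow in groups of four: number, elevation, azimuth, SNR.
    for (std::size_t i = 4; i + 3 < f.size(); i += 4) {
        SatInfo s = { to_int_or_minus1(f[i]),     to_int_or_minus1(f[i + 1]),
                      to_int_or_minus1(f[i + 2]), to_int_or_minus1(f[i + 3]) };
        sats.push_back(s);
    }
    return sats;
}
```

Fed the first $GPGSV line above, this should report 11 satellites in view and return four entries, with satellite 24 at elevation 54, azimuth 258, SNR 28.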

Maybe I will modify the tokenizer that I was experimenting with in my most recent tests with Eliza, in order to parse all of this stuff out, just because it is possible to do. Or maybe there is already an Arduino-based NMEA string to JSON converter floating around that I can borrow. But then I would also need a JSON to C++ or Python object data extractor, which might be a bit bloated for a P1 chip, if I try to do more with this on the hardware side. And that, of course, would be the easy part, compared to getting something that might look like an OpenGL stack to work on such a platform. But never say never.

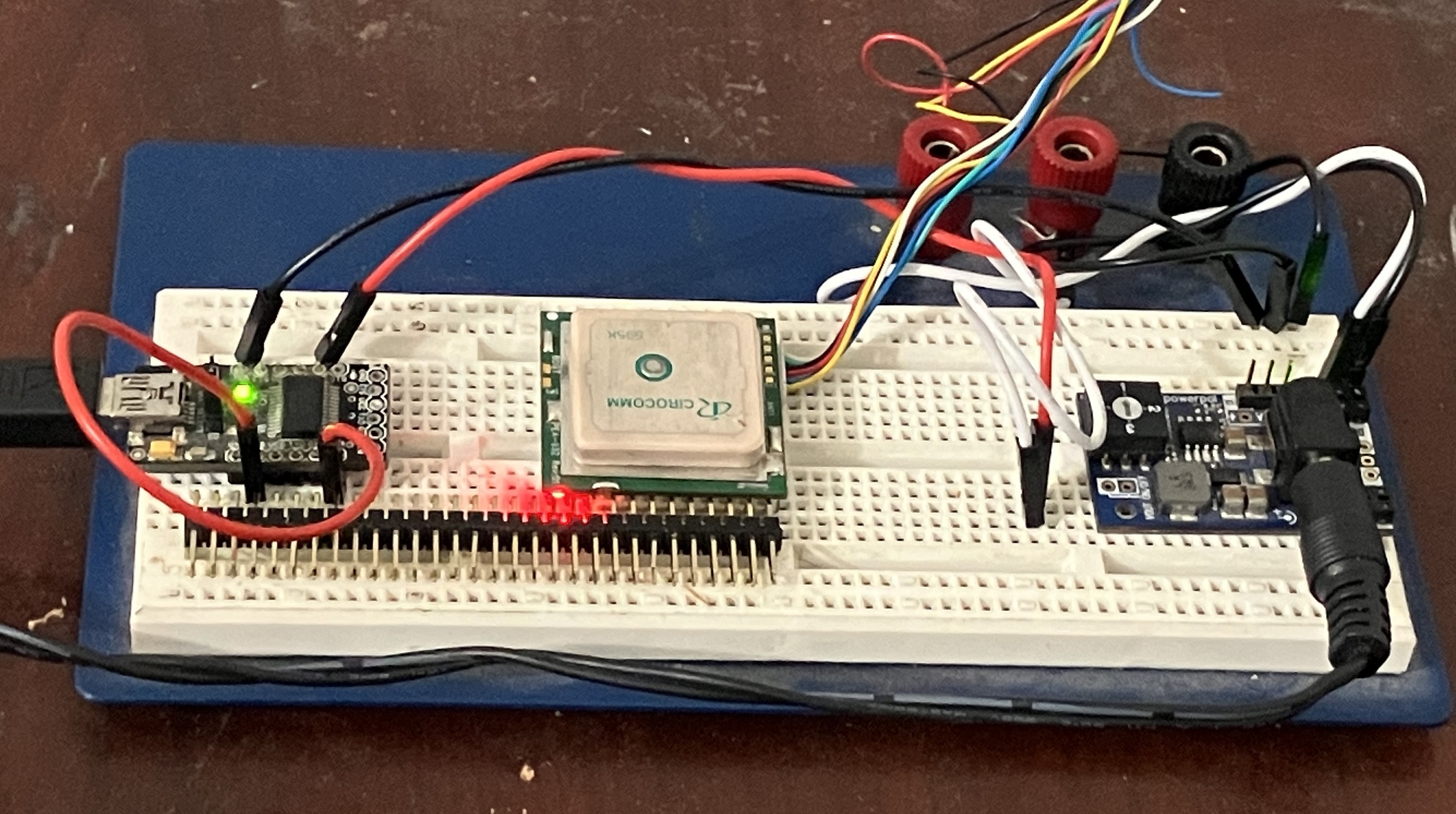

At least right now, I have something that is blinking and streaming data, at a nice "Cesium disciplined" atomic 1 Hz provided by the GPS, of course. I don't know how good the jitter is on the UART output for this particular module, though. Hopefully the error is never more than 1/4800 second, i.e., one bit time at this module's 4800 baud rate, for as long as the module is powered up. As long as it is giving time messages "exactly once per second", that is what it should imply.
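To put numbers on that, here is a minimal sketch of the serial timing arithmetic. It assumes 8N1 framing (10 bits on the wire per character), which is common for these modules but is not something I have confirmed for this one, and the function names are just for illustration.

```cpp
#include <cassert>

// Time to clock out one bit at the given baud rate, in microseconds.
double bit_time_us(double baud) {
    assert(baud > 0.0);
    return 1.0e6 / baud;
}

// Time to send n characters, in milliseconds, assuming 8N1 framing
// (1 start + 8 data + 1 stop = 10 bits per character).
double transmit_time_ms(int n_chars, double baud) {
    assert(n_chars >= 0);
    return n_chars * 10.0 * bit_time_us(baud) / 1000.0;
}
```

At 4800 baud, `bit_time_us(4800.0)` works out to about 208 microseconds, while a 70-character sentence takes roughly 146 ms to arrive via `transmit_time_ms(70, 4800.0)`. So the 1/4800 second figure is at best a bound on when a given bit edge shows up, not on when a whole sentence has been received.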

Viewing the GPS data in "Propeller Debug Terminal" looks something like this for now. Here I have a terminal mode and an oscilloscope mode, running concurrently, even though there is no streaming oscilloscope data to decode and display. Nonetheless, this is an existing Microsoft Foundation Class Multi-Document Multi-View application, which should have no problem displaying other things, as well as a multiplicity of other things, all at the same time.

Now I can make "Propeller Debug Terminal" OpenGL aware, allow it to simultaneously interact with a modified instance of MegaHAL, add some Zodiac plots (maybe), as well as the ever-so-important "Teapot in the Garden," where the lighting model is based on the actual calculated position of the sun in the sky, all according to the user's longitude and latitude. Then, who knows? Rewrite the whole thing in Java for iOS or Android? Maybe. Add some world-class Tarot Graphics? Maybe? Or create a whole new "virtual card game" based on some of my other work. Maybe.
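For the sun position, something like the following textbook approximation might be good enough to aim a light at a teapot. It is only a sketch: it uses a simple cosine model for the solar declination, treats local solar time as UTC plus longitude/15, and ignores the equation of time and atmospheric refraction, so expect errors on the order of a degree or so. The function names are placeholders, not existing code.

```cpp
#include <cassert>
#include <cmath>

const double kPi = 3.14159265358979323846;

inline double deg2rad(double d) { return d * kPi / 180.0; }
inline double rad2deg(double r) { return r * 180.0 / kPi; }

// Approximate solar declination in degrees for a day of the year (1..366).
double solar_declination_deg(int day_of_year) {
    assert(day_of_year >= 1 && day_of_year <= 366);
    return -23.44 * std::cos(deg2rad(360.0 / 365.0 * (day_of_year + 10)));
}

// Approximate solar elevation in degrees above the horizon.
// lat_deg: north positive; lon_deg: east positive; utc_hours: 0..24.
double solar_elevation_deg(double lat_deg, double lon_deg,
                           int day_of_year, double utc_hours) {
    // Crude local solar time: 15 degrees of longitude per hour.
    double solar_hours = utc_hours + lon_deg / 15.0;
    double hour_angle = deg2rad(15.0 * (solar_hours - 12.0));
    double lat = deg2rad(lat_deg);
    double decl = deg2rad(solar_declination_deg(day_of_year));

    double s = std::sin(lat) * std::sin(decl) +
               std::cos(lat) * std::cos(decl) * std::cos(hour_angle);
    // Guard against rounding pushing s just outside asin's domain.
    if (s > 1.0) s = 1.0;
    if (s < -1.0) s = -1.0;
    return rad2deg(std::asin(s));
}
```

As a sanity check, on the equator at longitude 0 around the March equinox (day 81) at 12:00 UTC this puts the sun almost directly overhead, and at 00:00 UTC almost directly underfoot.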

Maybe I should ask the Teapot what it thinks I should do.

Then again, I'm not quite there yet. But I did manage to get a version of Propeller Debug Terminal to compile so that I can also spawn simultaneous instances of MegaHAL and Eliza, and even though they aren't talking to each other yet, it is headed in that direction. Maybe what I should do, since this is a Multi-Document, Multi-View based application, is to allow an unlimited number of conversations with either Eliza or MegaHAL, where each instance is automatically bound to whichever main window had focus at the time that instance was spawned. That would allow me to interact with specializations, like a "version" of Eliza that recognizes the "$GPGGA" keyword at the beginning of a sentence, and which might then do a function pointer lookup to invoke the correct parser. That would make good use of the existing tokenizer and text_object management classes, as well as extending their functionality.
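The function pointer lookup could be as simple as a map from keyword to parser. This is a hypothetical sketch, not the actual Eliza code: `SentenceParser`, `ParserRegistry`, and the two placeholder parsers are invented names, and a real parser would hand the sentence off to the tokenizer rather than just checking the prefix.

```cpp
#include <cassert>
#include <cstddef>
#include <map>
#include <string>

// Signature that every sentence parser would share (an assumption).
typedef bool (*SentenceParser)(const std::string& sentence);

class ParserRegistry {
public:
    void add(const std::string& keyword, SentenceParser parser) {
        assert(parser != NULL);
        m_parsers[keyword] = parser;
    }

    // The keyword is everything up to the first comma, e.g. "$GPGGA".
    // Returns false when no parser claims the sentence, so the caller
    // can fall back to the normal conversational response.
    bool dispatch(const std::string& sentence) const {
        std::string keyword = sentence.substr(0, sentence.find(','));
        std::map<std::string, SentenceParser>::const_iterator it =
            m_parsers.find(keyword);
        if (it == m_parsers.end()) return false;
        return it->second(sentence);
    }

private:
    std::map<std::string, SentenceParser> m_parsers;
};

// Placeholder parsers that only confirm the sentence type.
bool parse_gga(const std::string& s) { return s.compare(0, 6, "$GPGGA") == 0; }
bool parse_rmc(const std::string& s) { return s.compare(0, 6, "$GPRMC") == 0; }
```

Usage would be along the lines of `registry.add("$GPGGA", parse_gga);` at startup, then calling `registry.dispatch(line)` on each incoming line before handing anything unrecognized to the regular Eliza response logic.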

I am doing this, of course, right now in Visual Studio 2005, but I also have a whole bunch of changes to the framelisp library that I made so that it would compile under VS2022, and so that the Pascal-style IO routines could also be called from CUDA code, i.e., via callbacks for debugging purposes. This was discussed, of course, in another project entitled "Deep Sneak, for Lack of a Better Name."

That, of course, could open up some altogether new possibilities as far as other hardware platforms that this might be made to work on. NVIDIA Jetson even? Maybe?

In the meantime, I am now at a point where I can get back onto the task of working out some of the message routing issues.

Thus, now I can run Eliza in a CEditView window instead of a pop-up CONSOLE view. Yet, for now, I think this is still quite a bit goofy, since I am getting debug messages sent to the CEditView, in addition to Eliza responses. Maybe I should create an Eliza preferences dialog box, that could have a bunch of check boxes, which in turn would specify whether Eliza gets launched in a separate CONSOLE view, or whether the entire chat session should run in a CEditView, but with debugging and diagnostic messages going to a hopefully now "optional" debugging window.

Ideally, there would be some kind of pipe list in a config file that gets loaded when the program starts. It could also handle the instructions that tell the system to search for a GPS module at 4800 bps on whatever COM port (currently COM3), and then route the NMEA strings to a not yet written parser, even though the tokenizer itself exists, of course! That will make it possible to extract the GMT time, along with the longitude and latitude data, which will then be used to update the solar data based on live streaming, i.e., once every second.
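Once the fields are split out, turning them into numbers is mostly arithmetic. NMEA packs latitude as `ddmm.mmmm` and longitude as `dddmm.mmmm` (degrees followed by decimal minutes), and time as `hhmmss.sss`. Here is a hedged sketch of the conversions; the function names are hypothetical. Note that the status field in $GPRMC is `A` for a valid fix and `V` for void, so a real parser should check it before trusting the position.

```cpp
#include <cassert>
#include <cstddef>
#include <cstdlib>
#include <string>

// Convert an NMEA coordinate field to signed decimal degrees.
// degree_digits is 2 for latitude ("ddmm.mmmm"), 3 for longitude
// ("dddmm.mmmm"). South and west come back negative.
// Returns 0.0 for a field too short to hold any minutes.
double nmea_to_degrees(const std::string& field, int degree_digits,
                       char hemisphere) {
    assert(degree_digits == 2 || degree_digits == 3);
    if (field.size() <= static_cast<std::size_t>(degree_digits)) return 0.0;
    double degrees = std::atof(field.substr(0, degree_digits).c_str());
    double minutes = std::atof(field.substr(degree_digits).c_str());
    double value = degrees + minutes / 60.0;
    return (hemisphere == 'S' || hemisphere == 'W') ? -value : value;
}

// Convert an NMEA "hhmmss.sss" time field to fractional hours (UTC).
// Returns -1.0 if the field is too short.
double nmea_time_to_hours(const std::string& field) {
    if (field.size() < 6) return -1.0;
    int hh = std::atoi(field.substr(0, 2).c_str());
    int mm = std::atoi(field.substr(2, 2).c_str());
    double ss = std::atof(field.substr(4).c_str());
    return hh + mm / 60.0 + ss / 3600.0;
}
```

For example, the `103558.000` time field from the $GPRMC line above comes out as about 10.5994 hours, and a made-up latitude field of `4807.038` with hemisphere `N` (an illustrative value, not from my receiver) comes out as about 48.1173 degrees.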

So it is just a matter now of navigating more of the rabbit warren of command routing, while doing further "feature integration" insofar as this particular build environment is concerned.

A little more fiddling with the message routing, and now the Eliza transcript is running in a CEditView, while another CEditView is streaming live GPS data. At the same time, I cleaned up the message routing in Eliza, so that although user text is still going into a CONSOLE window, I am no longer sending the debugging data to the main window.

How nice. Almost to the point where I can do a check-in on Git. Fixing up the main loop in Eliza was surprisingly simple.

    int ELIZA::do_main (int argc, const char **args)
    {
        char *buffer1 = new char [256];
        char *buffer2 = NULL;
        int num = 0, result = 0;
        writeln(m_uid,"Hello. My name is Eliza. How can I help you today?");
        // Loops forever, so buffer1 is never released and the
        // return at the bottom is never reached.
        while (true)
        {
            strcpy_s (buffer1,256,"");
            if (m_uid!=output)
                write(m_uid,">");
            write(debug_term,">");
            READLN(m_uid,buffer1);
            if (m_uid!=output)
                writeln(m_uid,buffer1);
            writeln(output);
            m_textIn = buffer1;
            memcheck ("Was ready to Call Eliza");
            m_textOut = response ();
            memcheck ("Just Returned from Eliza");
            m_textOut >> buffer2;
            writeln(m_uid,buffer2);
            // Assumes operator>> allocates buffer2 with plain new;
            // if it uses new [], this needs to be delete [].
            delete buffer2;
        }
        return result;
    }

So this is how I am currently implementing and making use of Pascal-style I/O from C++. Yet I also have this idea of wanting to be able to write to a stream reader, or read from a stream writer, or something like that. Or write to a calculator object and then get back results by "reading" the virtual display, instead of using function calls. Maybe. That way, things could happen asynchronously in the background. Maybe there is a whole new way of doing things here. Like maybe there could be a "when" statement, so that commands could be issued like "When the sun comes up, dispense cat food."
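One way a "when" statement might be approximated in plain C++ is a list of condition/action pairs that gets polled once per tick, say on every GPS message. This is purely a sketch of the idea, assuming a C++11 compiler (so the VS2022 build rather than VS2005), and `WhenRules` is an invented name. Each action fires only when its condition goes from false to true, so the cat gets fed once at sunrise instead of once per second all day.

```cpp
#include <cassert>
#include <cstddef>
#include <functional>
#include <vector>

class WhenRules {
    struct Rule {
        std::function<bool()> condition;
        std::function<void()> action;
        bool was_true;  // condition value seen on the previous poll
    };
    std::vector<Rule> m_rules;

public:
    // Register "when <condition>, do <action>".
    void when(std::function<bool()> condition, std::function<void()> action) {
        assert(condition && action);
        Rule r = { condition, action, false };
        m_rules.push_back(r);
    }

    // Call once per tick. Fires an action on the false-to-true edge only.
    void poll() {
        for (std::size_t i = 0; i < m_rules.size(); ++i) {
            bool now = m_rules[i].condition();
            if (now && !m_rules[i].was_true) m_rules[i].action();
            m_rules[i].was_true = now;
        }
    }
};
```

With a variable holding the current solar elevation, the cat food rule would read something like `rules.when([&] { return elevation > 0.0; }, [&] { dispense_cat_food(); });`, where `dispense_cat_food` is, for now, entirely imaginary.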

Maybe.

Meow.

glgorman
