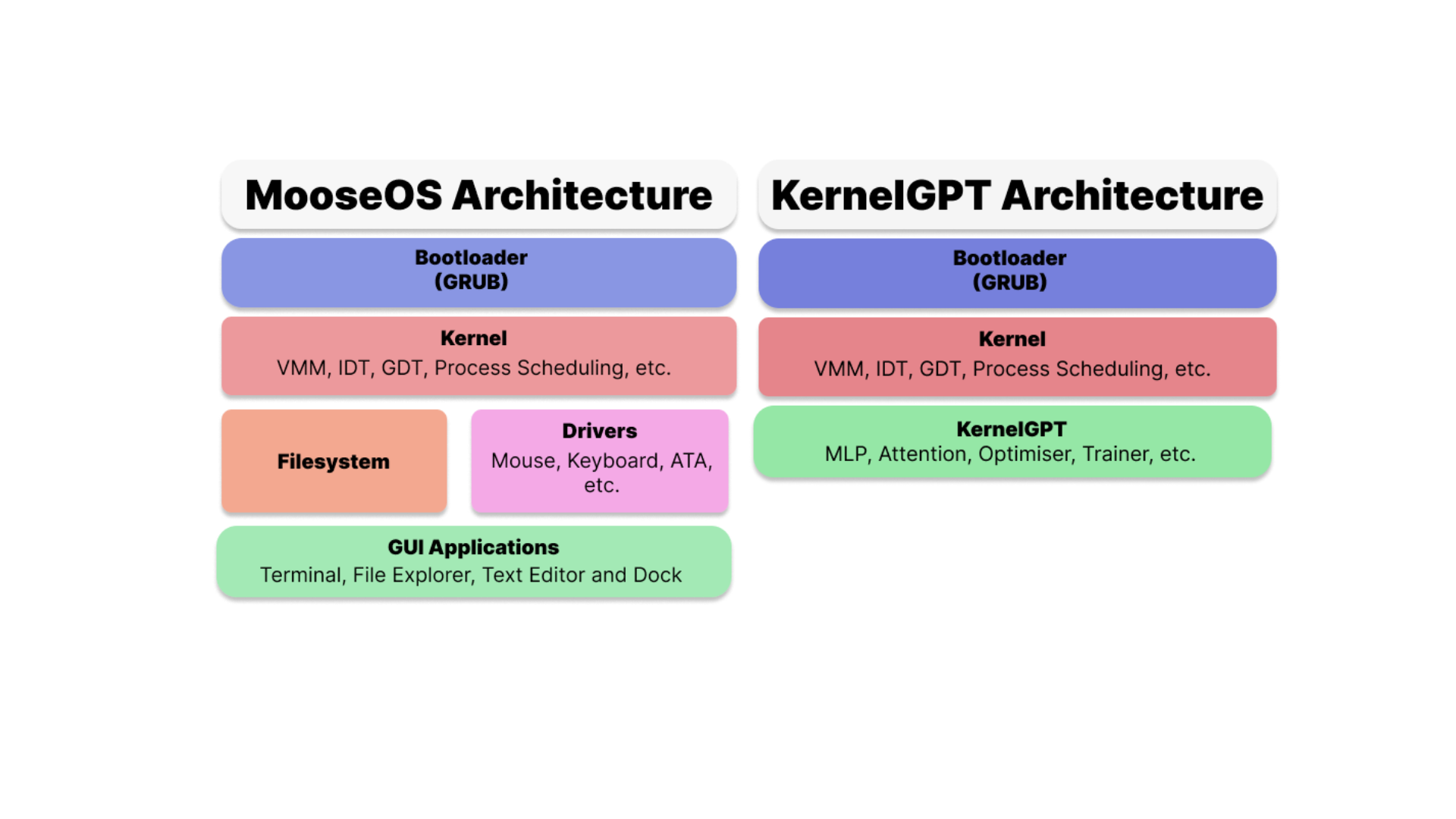

KernelGPT takes MooseOS's source code and strips out the parts it does not need, such as filesystems, ATA support, and the GUI, leaving a mostly bare-bones kernel. The LLM itself sits directly inside the kernel.

Caption: A (slightly oversimplified) description of MooseOS vs KernelGPT architecture.

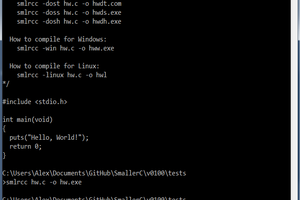

The LLM design was inspired by Andrej Karpathy's MicroGPT. That meant implementing a GPT-2-style LLM while keeping Karpathy's choices of RMSNorm in place of LayerNorm and ReLU in place of GELU. To merge the model into MooseOS, KernelGPT uses an arena allocator for simple memory allocation and initialises the FPU for floating-point arithmetic.
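To make the arena allocator idea concrete, here is a minimal sketch of a bump-style arena in freestanding-friendly C. It is not taken from the KernelGPT source: the names `Arena`, `arena_init`, `arena_alloc`, `arena_alloc_floats`, and `arena_reset` are hypothetical, and the sketch assumes the kernel can hand the arena one fixed buffer at boot.

```c
#include <stddef.h>
#include <stdint.h>

/* Hypothetical arena type: one fixed buffer plus a bump offset. */
typedef struct {
    uint8_t *base;     /* start of the backing buffer */
    size_t   capacity; /* total bytes available */
    size_t   offset;   /* bytes handed out so far */
} Arena;

/* Point the arena at a caller-provided buffer (for example a static array). */
void arena_init(Arena *a, void *buffer, size_t capacity) {
    a->base = (uint8_t *)buffer;
    a->capacity = capacity;
    a->offset = 0;
}

/* Return `size` bytes aligned to `align` (assumed to be a power of two),
   or NULL if the arena is exhausted. Individual frees are not supported. */
void *arena_alloc(Arena *a, size_t size, size_t align) {
    uintptr_t current = (uintptr_t)a->base + a->offset;
    uintptr_t aligned = (current + (align - 1)) & ~(uintptr_t)(align - 1);
    size_t start = (size_t)(aligned - (uintptr_t)a->base);

    if (start > a->capacity || size > a->capacity - start) {
        return NULL;
    }
    a->offset = start + size;
    return a->base + start;
}

/* Convenience wrapper for float buffers such as weights or activations. */
float *arena_alloc_floats(Arena *a, size_t count) {
    if (count > SIZE_MAX / sizeof(float)) {
        return NULL; /* size computation would overflow */
    }
    return (float *)arena_alloc(a, count * sizeof(float), _Alignof(float));
}

/* Release everything at once by rewinding the bump offset. */
void arena_reset(Arena *a) {
    a->offset = 0;
}
```

In a design like this, allocation is a pointer bump and there is no per-object bookkeeping, which suits a kernel that has no general-purpose heap. A caller could, for instance, call `arena_init` once on a static buffer and call `arena_reset` between generations to reuse scratch memory.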

MicroGPT's `names.txt` was converted into a C header before runtime so that KernelGPT can access the vocabulary directly in memory, avoiding any filesystem dependency.
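As a rough illustration of the header approach, the sketch below embeds text as a constant array and derives a character-level vocabulary from it without touching a filesystem. The array name `names_data`, the three-name sample, and the `build_vocab` helper are all assumptions made for this example. The real header's layout is not shown in this write-up, and a tool such as `xxd -i` is only one possible way to generate it.

```c
#include <stddef.h>

/* Stand-in for the generated header. The contents are an illustrative
   sample, not the real data set. */
static const char names_data[] = "emma\nolivia\nava\n";
static const size_t names_len = sizeof(names_data) - 1; /* drop trailing NUL */

/* Hypothetical helper: collect the unique non-newline bytes of `text`
   in ascending order. `vocab` must have room for 256 entries.
   Returns the number of entries written. */
size_t build_vocab(const char *text, size_t len, unsigned char *vocab) {
    unsigned char seen[256] = {0};
    size_t count = 0;

    /* Mark every byte that occurs, treating newlines as separators. */
    for (size_t i = 0; i < len; i++) {
        unsigned char c = (unsigned char)text[i];
        if (c != '\n') {
            seen[c] = 1;
        }
    }

    /* Emit marked bytes in ascending order so token ids are stable. */
    for (int c = 0; c < 256; c++) {
        if (seen[c]) {
            vocab[count++] = (unsigned char)c;
        }
    }
    return count;
}
```

Because the data is compiled into the kernel image, a call such as `build_vocab(names_data, names_len, vocab)` only reads memory that is already mapped when the kernel starts.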

Extra Links/Credits

I built MooseOS. You can view

- the repo

- the Hackaday article about it

Andrej Karpathy built MicroGPT. You can find his GitHub Gist here.

Ethan Zhang

Martin

Brandon

Adam Guilmet

Alexey Frunze
