Project Details: SamuRoid Technical Deep-Dive

1. Project Vision

SamuRoid is a 22-DOF bipedal humanoid platform built at the intersection of embodied AI and real-time bipedal locomotion. By bridging large language models (LLMs) such as DeepSeek with the ROS (Robot Operating System) ecosystem, SamuRoid translates high-level semantic intent into precise physical movements.

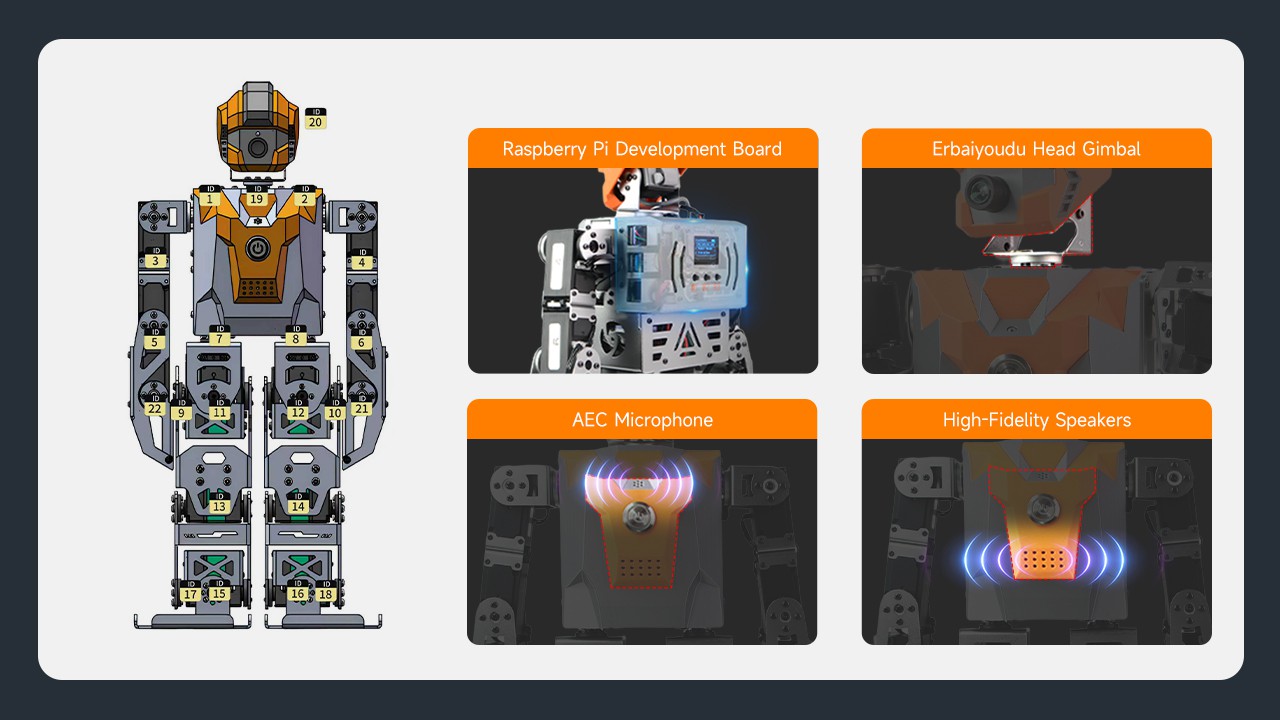

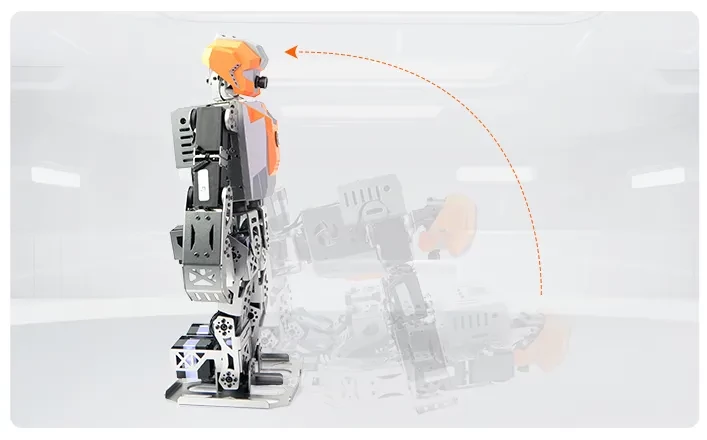

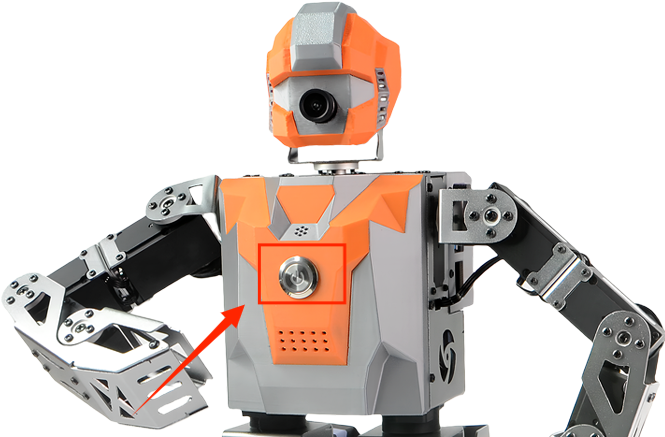

2. Mechanical Design & Kinematics

The chassis is built from high-strength aluminum alloy with a static-spray finish, giving it the structural rigidity needed for dynamic balancing.

- Degrees of Freedom (22 DOF) Breakdown:

  - Head: 2 DOF (Pan/Tilt for vision tracking)

  - Shoulders/Arms: 2 DOF (Shoulders) + 4 DOF (Arms) + 2 DOF (Hands)

  - Lower Body: 10 DOF (Legs) + 2 DOF (Feet)

- Actuation: Powered by XRS300 high-voltage serial bus servos.

  - Stall Torque: ≥30 kgf·cm @ 12 V

  - Feedback: Real-time position, temperature, and load monitoring via the serial bus protocol.
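As a quick check on the figures above, the sketch below encodes the joint groups as a small table, confirms they sum to 22 DOF, and converts the rated stall torque from kgf·cm to SI units. The group names simply mirror the list; how each group maps to individual servos or servo IDs is not given in the source, so the code works only at the group level.

```python
"""Sanity-check the SamuRoid DOF breakdown and convert the servo stall torque."""
from typing import Dict

# DOF per joint group, copied from the breakdown above.
DOF_BREAKDOWN = {
    "head (pan/tilt)": 2,
    "shoulders": 2,
    "arms": 4,
    "hands": 2,
    "legs": 10,
    "feet": 2,
}

STANDARD_GRAVITY = 9.80665  # m/s^2, exact by definition (1 kgf = 9.80665 N)


def total_dof(breakdown: Dict[str, int]) -> int:
    """Sum the degrees of freedom over all joint groups."""
    return sum(breakdown.values())


def kgf_cm_to_nm(torque_kgf_cm: float) -> float:
    """Convert kgf·cm to N·m: multiply by g to get N·cm, divide by 100 for N·m."""
    return torque_kgf_cm * STANDARD_GRAVITY / 100.0


if __name__ == "__main__":
    print(f"Total DOF: {total_dof(DOF_BREAKDOWN)}")
    print(f"Stall torque >= {kgf_cm_to_nm(30.0):.2f} N·m")
```

With the listed values this reports 22 DOF in total and a stall torque of at least about 2.94 N·m per servo.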

3. Electronic Architecture & Sensing

The system adopts a master-slave control architecture that separates high-level AI reasoning (on the master) from low-level motor control (on the slave).
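One way to picture this split: the master decides what each joint should do and sends compact byte frames over a serial link, and the slave board executes them. The source does not document the PWR.ROSBOT.X wire protocol, so the frame layout below (a `0x55 0x55` header, servo ID, length, command code, little-endian payload, inverted-sum checksum) and the `CMD_MOVE` code are hypothetical, chosen only to resemble a typical serial-bus servo packet.

```python
"""Hypothetical master-side encoder/decoder for one serial-bus servo command.

Frame layout (ASSUMED for illustration, not the documented PWR.ROSBOT.X protocol):
    0x55 0x55 | servo_id | length | command | payload ... | checksum
`length` counts the bytes from itself through the checksum, and
checksum = ~(servo_id + length + command + sum(payload)) & 0xFF.
"""
import struct
from typing import Tuple

HEADER = b"\x55\x55"
CMD_MOVE = 0x01  # hypothetical "move to position" command code


def checksum(body: bytes) -> int:
    """Inverted 8-bit sum of every byte between the header and the checksum."""
    return (~sum(body)) & 0xFF


def encode_move(servo_id: int, position: int, duration_ms: int) -> bytes:
    """Build a move command: target position and travel time as little-endian uint16."""
    payload = struct.pack("<HH", position, duration_ms)
    length = 3 + len(payload)  # length byte + command byte + payload + checksum byte
    body = bytes([servo_id, length, CMD_MOVE]) + payload
    return HEADER + body + bytes([checksum(body)])


def decode_move(frame: bytes) -> Tuple[int, int, int]:
    """Parse and validate a frame built by encode_move; returns (id, position, duration)."""
    if frame[:2] != HEADER:
        raise ValueError("bad header")
    body, received = frame[2:-1], frame[-1]
    if len(body) != 7 or body[1] != len(body):
        raise ValueError("bad length")
    if checksum(body) != received:
        raise ValueError("checksum mismatch")
    if body[2] != CMD_MOVE:
        raise ValueError("unexpected command 0x%02X" % body[2])
    position, duration_ms = struct.unpack("<HH", body[3:7])
    return body[0], position, duration_ms
```

For example, `encode_move(1, 500, 1000)` yields a 10-byte frame that `decode_move` turns back into `(1, 500, 1000)`. On real hardware the bytes would be written to the board's UART, for instance with pySerial's `Serial.write`.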

- Compute Brain: Raspberry Pi 4B (4 GB RAM) running Ubuntu 18.04.

- Motion Controller: PWR.ROSBOT.X dedicated driver board. It handles DC-DC power conversion (SY8120ABC) and audio amplification, and serves as the hardware abstraction layer for the 22 servos.

- Sensing Suite:

  - IMU: MPU6050 6-axis IMU (3-axis gyroscope + 3-axis accelerometer) for attitude estimation and gait stabilization.

  - Vision: 1080p, 120° wide-angle USB camera (Robot-Eye 4.0).

  - Audio: High-precision AEC (acoustic echo cancellation) microphone and 1 W speaker for voice interaction.
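A common way to turn raw MPU6050 readings into an attitude estimate is a complementary filter: integrate the gyroscope rate for short-term accuracy and pull slowly toward the accelerometer's gravity-based angle to cancel drift. The source does not say which estimator SamuRoid uses, so this is a generic sketch; the blend factor `ALPHA = 0.98`, the units, and the axis convention are assumptions, and a Kalman filter would be an equally valid choice.

```python
"""Generic complementary filter for pitch/roll from a 6-axis IMU such as the MPU6050.

Assumptions (not from the SamuRoid source): accelerometer in g, gyroscope in deg/s,
body axes x forward, y left, z up, right-hand rotation; ALPHA = 0.98.
"""
import math
from typing import NamedTuple, Tuple

ALPHA = 0.98  # weight on the integrated gyro estimate (assumed)


class Attitude(NamedTuple):
    pitch: float = 0.0  # degrees, positive = nose down (rotation about +y)
    roll: float = 0.0   # degrees, positive = rotation about +x


def accel_angles(ax: float, ay: float, az: float) -> Tuple[float, float]:
    """Pitch and roll (degrees) implied by the measured gravity vector alone."""
    pitch = math.degrees(math.atan2(-ax, math.hypot(ay, az)))
    roll = math.degrees(math.atan2(ay, az))
    return pitch, roll


def update(att: Attitude, accel: Tuple[float, float, float],
           gyro: Tuple[float, float, float], dt: float) -> Attitude:
    """One filter step: integrate gyro rates, then blend toward the accelerometer angles.

    gyro = (gx, gy, gz) in deg/s; gx drives roll, gy drives pitch, gz (yaw) is unused.
    Small-angle approximation: body rates are treated as Euler-angle rates.
    """
    acc_pitch, acc_roll = accel_angles(*accel)
    gx, gy, _gz = gyro
    pitch = ALPHA * (att.pitch + gy * dt) + (1.0 - ALPHA) * acc_pitch
    roll = ALPHA * (att.roll + gx * dt) + (1.0 - ALPHA) * acc_roll
    return Attitude(pitch, roll)
```

The blend factor sets an effective time constant of roughly `ALPHA * dt / (1 - ALPHA)`, about 0.5 s at a 100 Hz update rate: shorter trusts the noisy accelerometer more, longer lets gyro drift accumulate.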

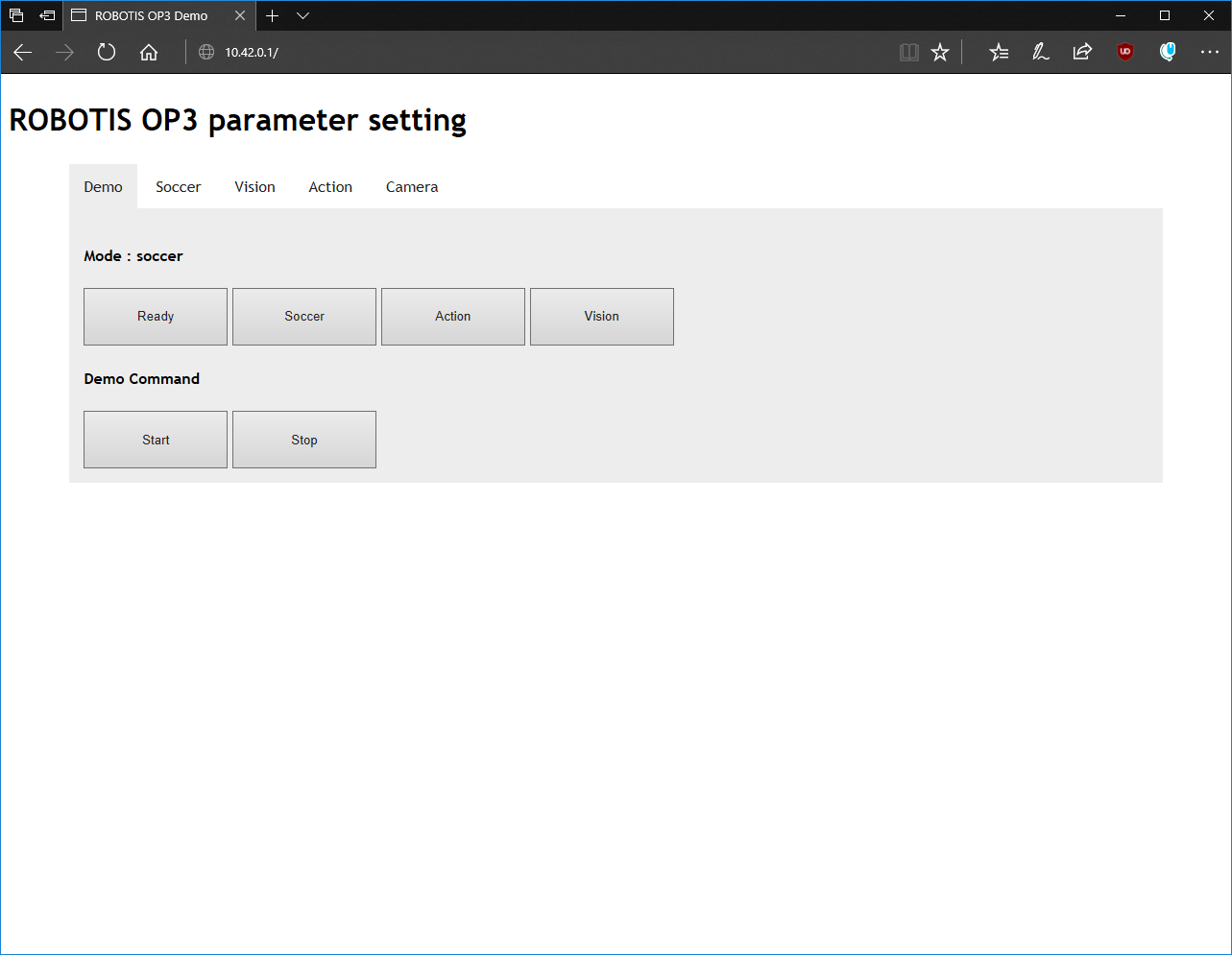

4. Software Stack & Locomotion Algorithms

SamuRoid is fully integrated with ROS Melodic. The codebase is open-source and supports both C++ and Python development.

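- Locomotion Engine: Combines inverse kinematics (IK) with the Linear Inverted Pendulum Model (LIPM). The LIPM treats the robot as a point mass at constant height to plan a center-of-mass (CoM) trajectory that stays balanced during dynamic gait transitions, and IK converts the resulting hip and foot targets into servo angles.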

- Vision Pipeline: OpenCV-based modules for face recognition, color tracking, and QR code localization.
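The locomotion bullet can be made concrete with the two ingredients in their simplest textbook form, restricted to the sagittal plane. The LIPM keeps the CoM at constant height z_c, giving x'' = (g / z_c)(x - p) for a support (ZMP) point p, which has a closed-form solution; a planar 2-link IK then finds the hip-pitch and knee angles that place the foot. The CoM height, thigh and shank lengths, and angle conventions are hypothetical placeholders rather than SamuRoid's measured geometry, and the real legs have more joints than this planar model.

```python
"""Textbook LIPM CoM propagation and planar 2-link leg IK (sagittal plane only).

All dimensions below are hypothetical placeholders, not SamuRoid measurements.
"""
import math
from typing import Tuple

G = 9.81      # gravitational acceleration, m/s^2
Z_C = 0.20    # assumed constant CoM height, m
THIGH = 0.08  # assumed thigh length, m
SHANK = 0.08  # assumed shank length, m


def lipm_state(x0: float, v0: float, p: float, t: float,
               z_c: float = Z_C) -> Tuple[float, float]:
    """Closed-form solution of x'' = (g / z_c) * (x - p) after time t.

    x0, v0: initial CoM position and velocity; p: support (ZMP) position.
    """
    tc = math.sqrt(z_c / G)  # pendulum time constant
    c, s = math.cosh(t / tc), math.sinh(t / tc)
    x = (x0 - p) * c + tc * v0 * s + p
    v = (x0 - p) / tc * s + v0 * c
    return x, v


def _clamp_unit(value: float) -> float:
    """Clamp to [-1, 1] so rounding error cannot push acos out of its domain."""
    return max(-1.0, min(1.0, value))


def leg_ik(x: float, h: float, l1: float = THIGH,
           l2: float = SHANK) -> Tuple[float, float]:
    """Hip pitch and knee flexion (radians) placing the foot x forward, h below the hip.

    Hip pitch is measured from vertical (positive = thigh forward); knee flexion is
    0 for a straight leg. Raises ValueError if the target is out of reach.
    """
    d = math.hypot(x, h)
    if d == 0.0 or d > l1 + l2 or d < abs(l1 - l2):
        raise ValueError("foot target out of reach")
    knee_inner = math.acos(_clamp_unit((l1**2 + l2**2 - d**2) / (2 * l1 * l2)))
    beta = math.acos(_clamp_unit((l1**2 + d**2 - l2**2) / (2 * l1 * d)))
    hip = math.atan2(x, h) + beta  # thigh sits beta ahead of the hip-foot line
    return hip, math.pi - knee_inner


def leg_fk(hip: float, knee: float, l1: float = THIGH,
           l2: float = SHANK) -> Tuple[float, float]:
    """Forward kinematics using the same conventions as leg_ik, for checking it."""
    x = l1 * math.sin(hip) + l2 * math.sin(hip - knee)
    h = l1 * math.cos(hip) + l2 * math.cos(hip - knee)
    return x, h
```

A walking controller built this way would use `lipm_state` to predict where the CoM will be at the end of each step, then call `leg_ik` on the resulting hip-to-foot offsets at every servo update.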
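Color tracking with OpenCV usually follows a threshold-then-centroid pattern: convert the frame to HSV, keep pixels inside a hue/saturation/value window (`cv2.inRange`), and take the mask's centroid from its image moments (`cv2.moments`). The stdlib-only sketch below reproduces that logic on a tiny RGB image so it runs without OpenCV, then converts the centroid into a normalized pan/tilt error that could steer the 2-DOF head. The HSV window and the error convention are assumptions, not SamuRoid's actual vision module.

```python
"""Threshold-and-centroid color tracking, stdlib only (mirrors cv2.inRange + cv2.moments).

The HSV window and pan/tilt error convention are illustrative assumptions.
"""
import colorsys
from typing import List, Optional, Tuple

Pixel = Tuple[int, int, int]  # (R, G, B), each 0-255

# Assumed target window: green-ish hues that are reasonably saturated and bright.
H_RANGE = (0.25, 0.45)  # colorsys hue in [0, 1); pure green is about 0.33
S_MIN, V_MIN = 0.4, 0.3


def in_range(px: Pixel) -> bool:
    """True if the pixel falls inside the HSV window."""
    h, s, v = colorsys.rgb_to_hsv(*(c / 255.0 for c in px))
    return H_RANGE[0] <= h <= H_RANGE[1] and s >= S_MIN and v >= V_MIN


def centroid(image: List[List[Pixel]]) -> Optional[Tuple[float, float]]:
    """(x, y) centroid of in-range pixels as (m10/m00, m01/m00), or None if none match."""
    m00 = m10 = m01 = 0
    for y, row in enumerate(image):
        for x, px in enumerate(row):
            if in_range(px):
                m00 += 1
                m10 += x
                m01 += y
    if m00 == 0:
        return None
    return m10 / m00, m01 / m00


def pan_tilt_error(c: Tuple[float, float], width: int,
                   height: int) -> Tuple[float, float]:
    """Offset of the centroid from the image center, normalized to [-1, 1] per axis."""
    cx, cy = (width - 1) / 2.0, (height - 1) / 2.0
    return (c[0] - cx) / cx, (c[1] - cy) / cy
```

A head controller could scale these errors by small gains and add them to the current pan/tilt servo targets each frame, turning the camera until the tracked object is centered.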

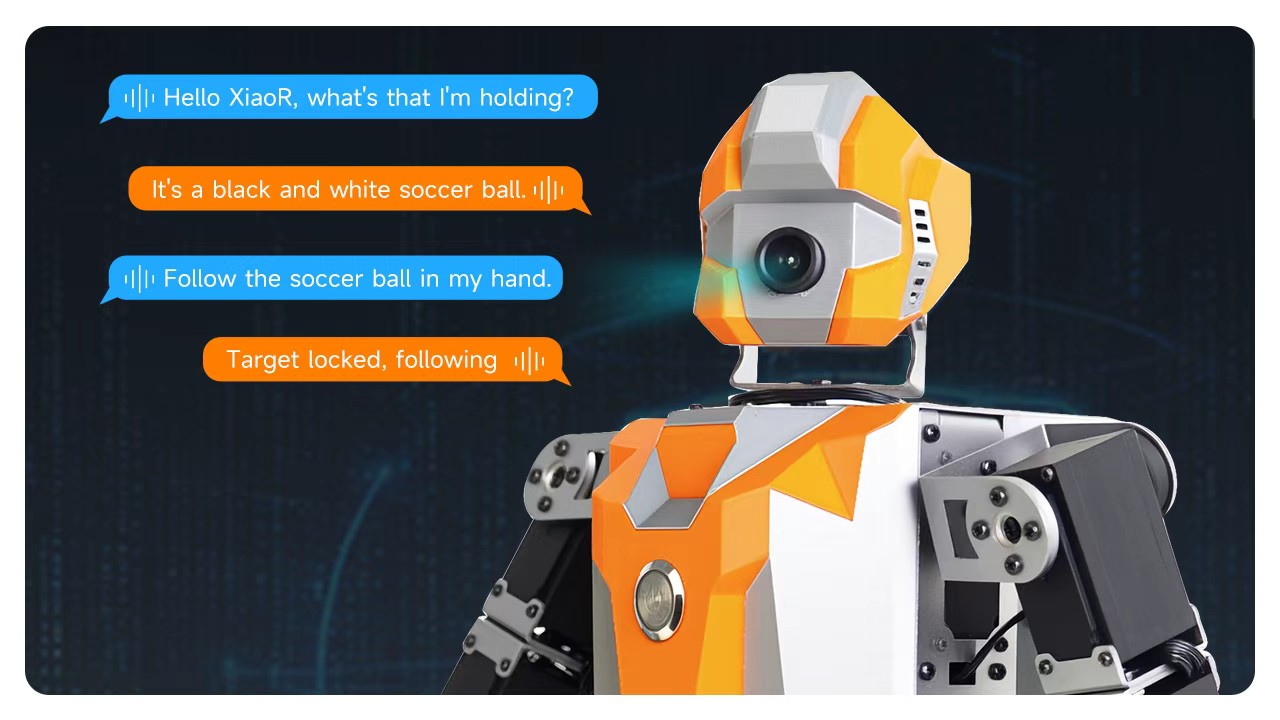

5. Embodied AI: Integrating LLMs

The defining feature of SamuRoid is its multimodal AI integration.

By connecting to the DeepSeek and Doubao LLM APIs, the robot performs semantic parsing of natural language. Instead of relying on hard-coded commands, it infers the user's intent:

- Input: "I am tired, show me some fun."

- Process: The LLM interprets "tired" and "fun" as a request for entertainment → selects the "Dance" action group → triggers the ROS action server.

- Feedback: Real-time status report via the integrated voice system.
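The intent pipeline above can be sketched as: ask the LLM to answer with a single JSON object naming one action group from a fixed list, validate the reply on the robot, and only then hand the action to the motion layer. The prompt wording, the action-group names other than "Dance", and the `call_llm` and `send_action_goal` hooks are hypothetical stand-ins; in the real system the first hook would wrap the DeepSeek or Doubao API and the second would send a goal to the ROS action server.

```python
"""Hypothetical intent-to-action dispatcher: LLM reply -> validated action group."""
import json
from typing import Callable, Tuple

# Action groups the motion layer accepts. "Dance" comes from the source;
# the other names are illustrative placeholders.
ACTION_GROUPS = {"Dance", "Wave", "Bow", "Stand"}
FALLBACK = "Stand"

PROMPT = (
    "You control a humanoid robot. Reply with JSON only, for example "
    '{{"action": "Dance", "reply": "..."}}. Allowed actions: {actions}.\n'
    "User: {utterance}"
)


def parse_intent(raw: str) -> Tuple[str, str]:
    """Return (action, spoken reply), falling back to a safe action on invalid output."""
    try:
        data = json.loads(raw)
    except json.JSONDecodeError:
        return FALLBACK, "Sorry, I didn't understand that."
    action = data.get("action") if isinstance(data, dict) else None
    if not isinstance(action, str) or action not in ACTION_GROUPS:
        return FALLBACK, "Sorry, I can't do that."
    return action, str(data.get("reply", ""))


def handle_utterance(utterance: str,
                     call_llm: Callable[[str], str],
                     send_action_goal: Callable[[str], None]) -> str:
    """Build the prompt, query the LLM, dispatch the action, and return the reply text.

    Both hooks are injected so they can be stubbed in tests or swapped per backend.
    """
    prompt = PROMPT.format(actions=", ".join(sorted(ACTION_GROUPS)), utterance=utterance)
    action, reply = parse_intent(call_llm(prompt))
    send_action_goal(action)
    return reply
```

Keeping the whitelist on the robot side means a malformed or unexpected LLM reply degrades to a safe default action instead of an arbitrary motion, and the returned reply text can be passed to the voice system for the status report.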

6. Technical Specifications Summary

- Dimensions: 190.98 × 141.6 × 389.81 mm

- Weight: 2.3 kg

- Battery: 12 V 3000 mAh LiPo (60 A discharge protection)

- Communication: Dual-band Wi-Fi (2.4 GHz/5 GHz), Bluetooth 5.0, PS2 wireless controller.
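The battery figures support a back-of-the-envelope runtime estimate: nominal stored energy is 12 V × 3 Ah = 36 Wh, and runtime is usable capacity divided by average current draw. The source gives no average current, so the draw values below are hypothetical, as is the 80% usable fraction reserved to avoid over-discharging the LiPo.

```python
"""Back-of-the-envelope battery energy and runtime for a 12 V, 3000 mAh pack."""

VOLTAGE_V = 12.0
CAPACITY_MAH = 3000.0


def energy_wh(voltage_v: float, capacity_mah: float) -> float:
    """Nominal stored energy in watt-hours (V x Ah)."""
    return voltage_v * capacity_mah / 1000.0


def runtime_min(capacity_mah: float, avg_current_a: float,
                usable_fraction: float = 0.8) -> float:
    """Minutes of operation at a constant average current.

    usable_fraction (assumed 0.8) keeps a reserve so the LiPo is not fully drained.
    """
    return capacity_mah / 1000.0 * usable_fraction / avg_current_a * 60.0


if __name__ == "__main__":
    print(f"Nominal energy: {energy_wh(VOLTAGE_V, CAPACITY_MAH):.0f} Wh")
    for amps in (1.0, 2.0, 4.0):  # hypothetical average current draws
        print(f"{amps:.1f} A average -> {runtime_min(CAPACITY_MAH, amps):.0f} min")
```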

alisa.wu

Gaultier Lecaillon

Joshua Gruenstein

Augusto