If you have been around these parts long enough, you know how classic chatbots like ELIZA, PARRY, and MegaHAL work. Then there were other important approaches to AI, like perceptrons, SHRDLU, Mathematica, Watson, and so on. Some of those have open source versions, and some do not. One thing they all had in common, and perhaps still do, is that there always seems to be a "great breakthrough" in AI, one that looks like as great a leap forward as if sustainable controlled fusion had also been solved. And then of course, no, not really. Better luck next time.

Then perhaps one of the biggest breakthroughs ever came with the landmark observation, "What if attention is all you really need?" Purely attention-based systems, however they actually work, have their own set of problems, chief among them, of course, efficiency. And yet there are some very interesting things that can be said about "attention" in and of itself, so maybe a digression into the history of all of this will prove worthwhile.

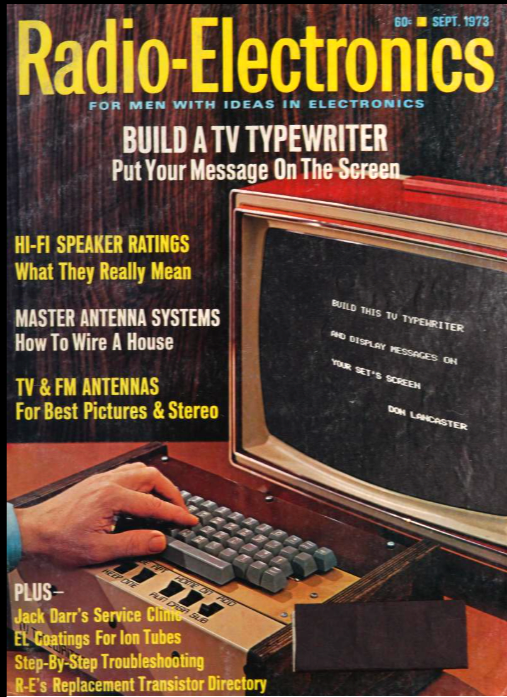

Most people reading this, of course, were not even born yet when Don Lancaster wrote the article for the very first build-it-yourself "TV Typewriter", as if all that mattered at the time was putting "your message on the screen."

glgorman
