Philip Koopman is a fantastic resource concerning everything safety, reliability, CRCs, checksums, about which he indeed wrote a book recently. And some FORTH of course. Never forget FORTH.

I contacted him about PEAC in October 2022, asking for help/validation of PEAC16x2. My article was in kiosks for more than a month already (and it's now in Creative Commons so you can read it online as you like). He could not help me, as he was busy with "work on autonomous vehicle safety".

Lately, looking back at his blog, I see that I missed a few things these last years, not just his book (which I'm very curious to get but I won't use Amazon).

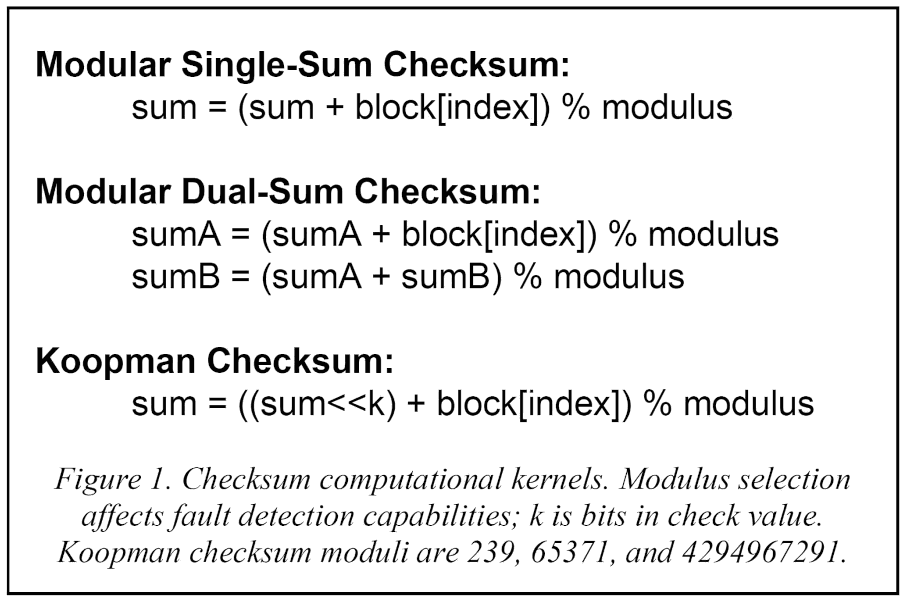

That's right: he has invented and released his own checksum, claiming better than HD3 (that is: detecting all error made of 1, 2 or 3 bits).

I'll have to find the value(s) of k.

No idea if he ever has somehow looked at my article but he published his own paper and the curves look nice.

https://arxiv.org/abs/2304.13496

http://checksumcrc.blogspot.com/2023/05/koopman-checksum.html

http://checksumcrc.blogspot.com/2024/02/comparative-speeds-for-different.html

http://checksumcrc.blogspot.com/2024/03/why-to-avoid-hash-algorithms-if-what.html

So what's the deal here?

From my perspective it looks like some Adler where the second register is replaced by a shift.

But WHY A SHIFT and not at least a rotation ? Part of the reason may be with the modulus which "recycles" the bits that would have been thrown away by the left shift. The k parameter alone might account for the HD1 bump compared to the "Modular Single-Sum Checksum" it is derived from. 65371 is a nice prime number with a good binary pattern.

Apart from providing a new class of algorithms that is somewhat better (HD-wise) than the classic Fletcher, Adler and such, without a crazy increase of computation time, I am more curious than blown away.

- Increasing from HD2 to HD3 is pretty nice but division is expensive. I'll have to check his implementation.

- The use of a large, single register might be a limitation. Remember that PEAC16x2 exceeds 2G states with only 16-bit registers. And leaving the whole register exposed to incoming data increases the risk of a crash.

- What about the "Adler defect" ? Using a modulus that is smaller than the data word creates ambiguity since two numbers could hash to the same result after the modulo. I wouldn't do that.

Now, to address the last concern, there is something else that is interesting: a parity mode. I reinvented it these last months but it seems new to me in the literature. Quoting:

· DualX-32P: This is DualX-32 with 15-bit modulus of 32749 and an additional parity computation. The parity computation boosts fault detection to HD=4 while still being faster than DualSum-32.

· Koopman-32P: This uses a 64-bit sum variable with a modulus of 0x7FFFFFED, processing the data word one 32-bit block at a time. This is slightly slower than Koopman-32 due to an extra XOR operation with an additional parity register inside the inner loop that is used to compute parity.

Going to HD4 is cool, at the expense of one data bit per word (or so, I'll have to double-check). Here, I'm doing (rediscovering) something similar by using the high bit (that should remain 0 even with random data) for an extra (out-of-band) data.

.

Now, let's not forget the different approaches and goals. Philip Koopman addresses the classic checksum case, where the data blocks are bare bytestreams that need to be preserved, in case of random access for examination. It could be in HW or SW.

In this project,

- the gPEAC acts both as a required scrambler and implicitly as a checksum, when some data words are agreed to be 0.

- There is no need to keep the data "intact" because scrambling is actually required for line balance and EMI.

- there is no need for a "fast software version" because it's running in HW/ASIC/FPGA only.

- The word size can be arbitrary, while classic checksum is limited to powers of two.

- With network errors, and particularly with MLT3 nibbles, a single line error event can create a very complex pattern of alteration, not just a flipped bit here and there.

- More importantly : Koopman works on "statistical" measures for long blocks but this projects asks for certainty of non-alteration. The receiver wants to be sure the latest received block is pristine, not "to what degree can I trust this? And what if more than 3 bits are flipped?"

So the parity bits play a big role here: sure they eat up some bandwidth (12.5% overhead) but they provide the desired certainty.

- whatever the number of error bits, it must leave a trace in the 36 bits of internal accumulators. Sooner or later, these changes percolate toward the MSB/parity and an error is flagged.

- If you want to erase/cancel this trace, you need more counter-values to correct the accumulators and this will leave more traces. Unlike CRC which is eager to be fooled.

- In the process, the C/D bit would be affected and trigger an error, too.

- Meanwhile, the GrayPar circuit adds HD4 on the "error" so it becomes even more difficult to achieve the correct pattern that will alter the data without detection at all.

- If errors are encountered, the protocol reduces the chunk size and the subsequent extra check words expose the whole gPEAC state.

So my design might appear over-engineered and very conservative but I want to achieve very high reliability despite cheap/subpar physical/analog circuits. Adding a checksum every kilobyte wouldn't cut it. And I don't know where Koopman's own rabbithole goes to, but I understand we diverge at some point.

Anyway, his design is quite funky :-)

Yann Guidon / YGDES

Yann Guidon / YGDES

Discussions

Become a Hackaday.io Member

Create an account to leave a comment. Already have an account? Log In.