-

Hardware Updates

10/28/2023 at 19:27 • 0 commentsIt's been a long time because my studies are taking up my time but I still wanted to share some hardware updates from about a year ago.

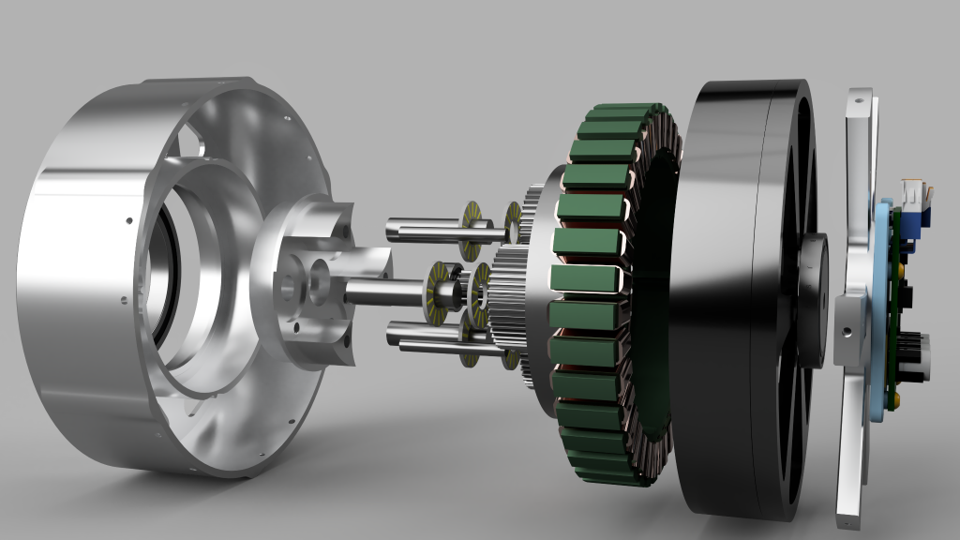

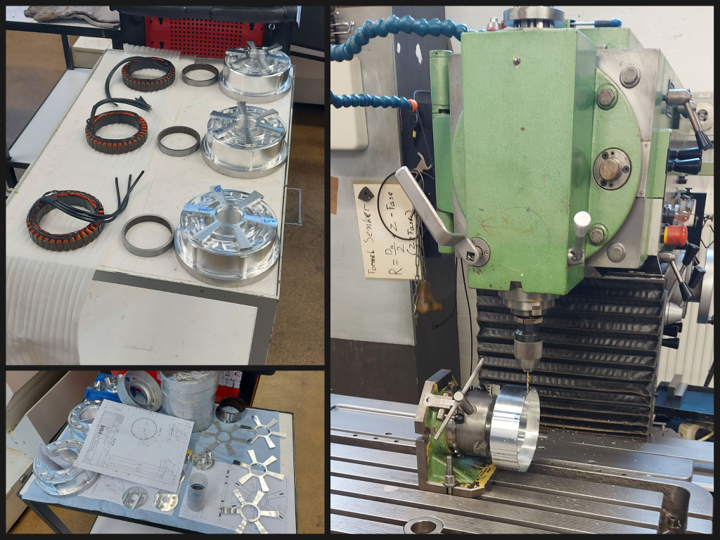

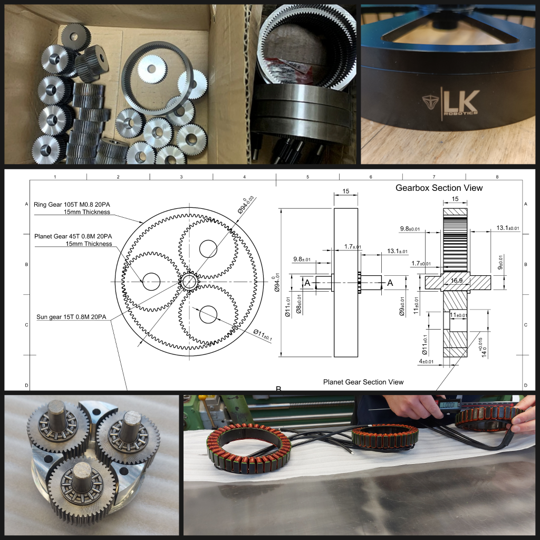

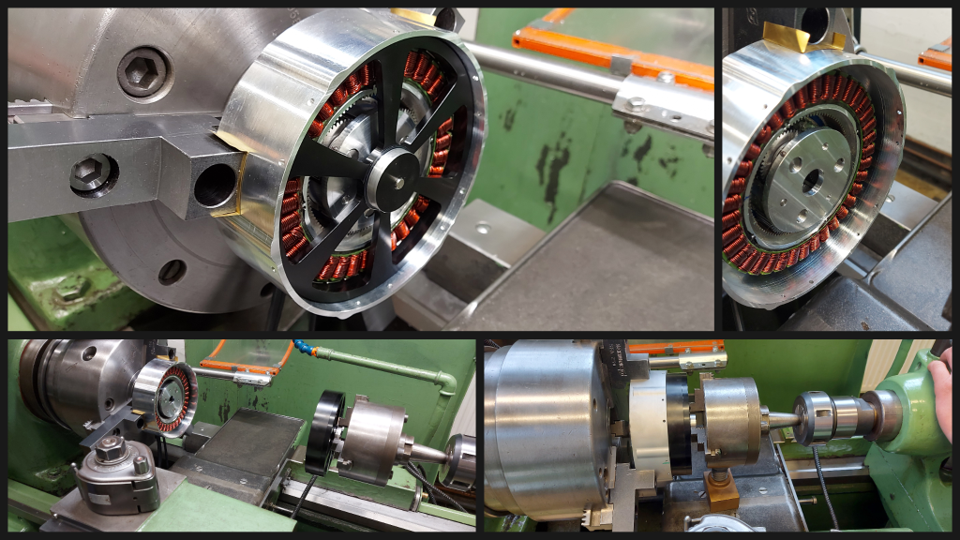

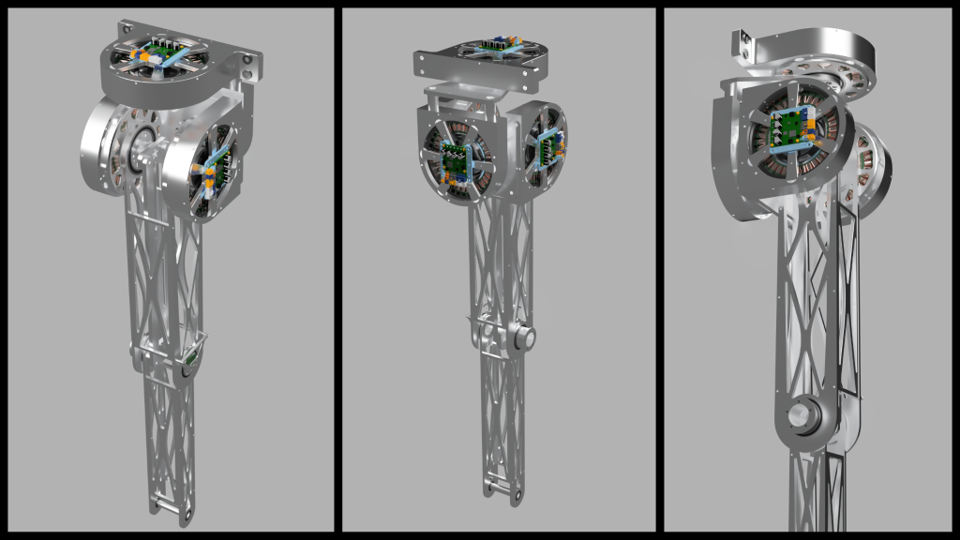

I started by planning, machining and assembling the actuator with the Institute of General Mechanics at RWTH Aachen:![]()

![]()

We had some fitment issues but after a few adjusted prototypes and shrink fits - and the parts finally arrived - the assembly could start:

![]()

For that, we cleverly used the lathe to lower the rotor into the stator/housing assembly, which worked surprisingly well after figuring out a solution to hold the rotor with the lathe.

![]()

![]()

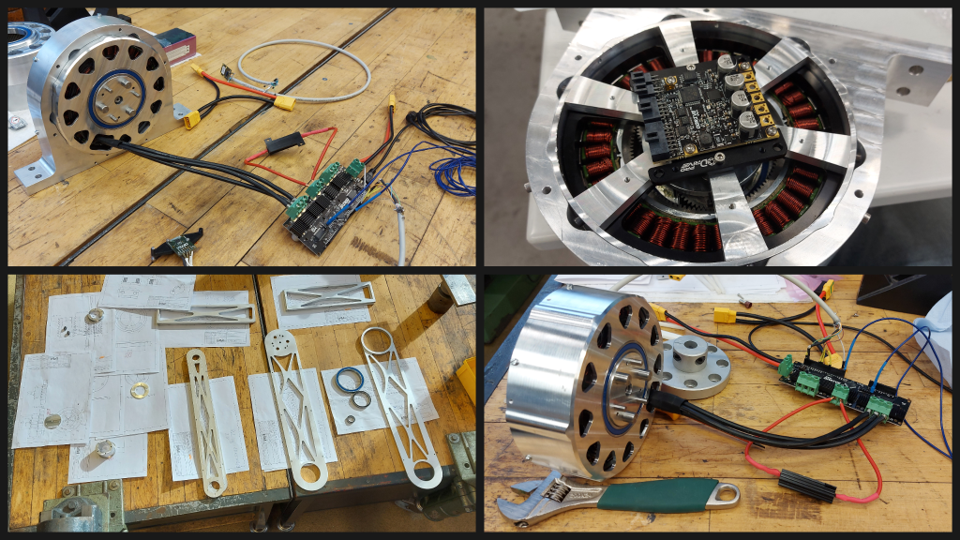

I used ODrive for servo control (as you can see whichever version I had available at the time because time was quite limited), and simultaneously machined the first leg parts (bottom left).

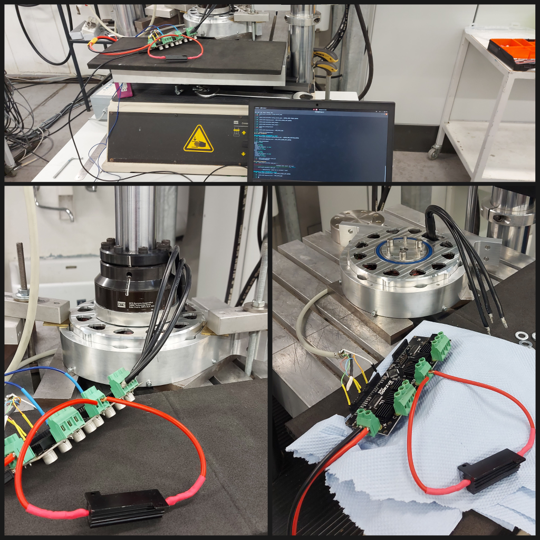

Keep in mind this was all done in about 3 weeks so I wasn't able to do everything perfectly. Below, you can see torque testing using a professional torque measuring jig that RWTH Aachen offered me. This allowed me to test torque commands vs. real measured torque, even under continuous rotation:![]()

I don't have any torque plots available unfortunately but I was able to test it with 125Nm commanded for a few seconds and it did reach that value without breaking. At least after fixing the connection between sun gear and rotor because it was slipping. I did also have some encoder issues though because I was still using ODrive v3.6.![]()

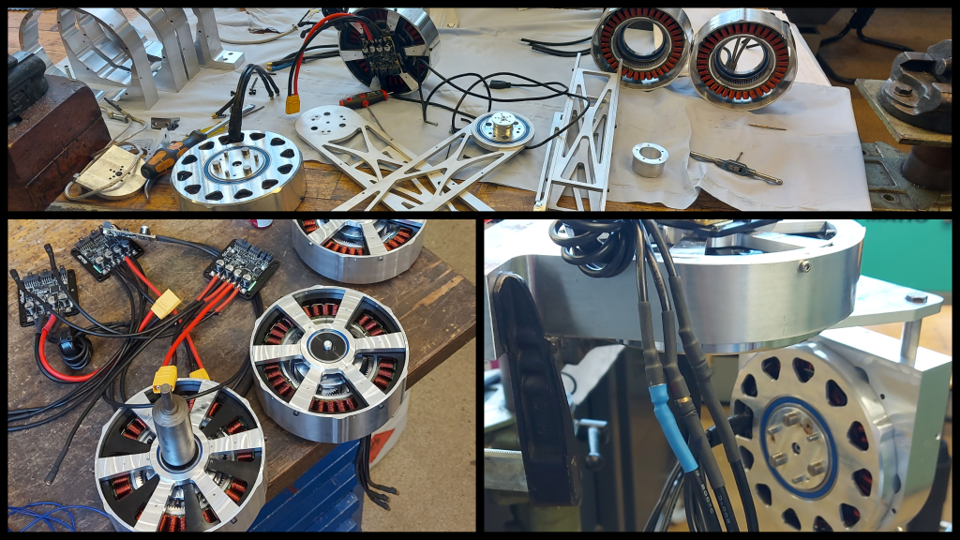

I didn't have much time to test things so I quickly moved on to building a few more actuators and assembling the leg. Just as another disclaimer, from this point on I have increasingly less detailed videos and pictures because time was *really* limited.

![]()

This was the current plan for a first prototype, simplified but still roughly the same degrees of freedom. I wanted to test impedance control on one leg and find out how many things I had to chance to make it work as well as it did in the simulation.

![]()

And this is it in real-life, I had to replace the planned cable-driven joint for the knee with a linkage last-minute because it was causing issues. Of course, I also had to implement a software correction for the knee angles as you can see in this video:

After a few days of troubleshooting the backlash, friction and software problems seen above, I got the same controller used in the simulation tests to move the leg in a controlled manner:

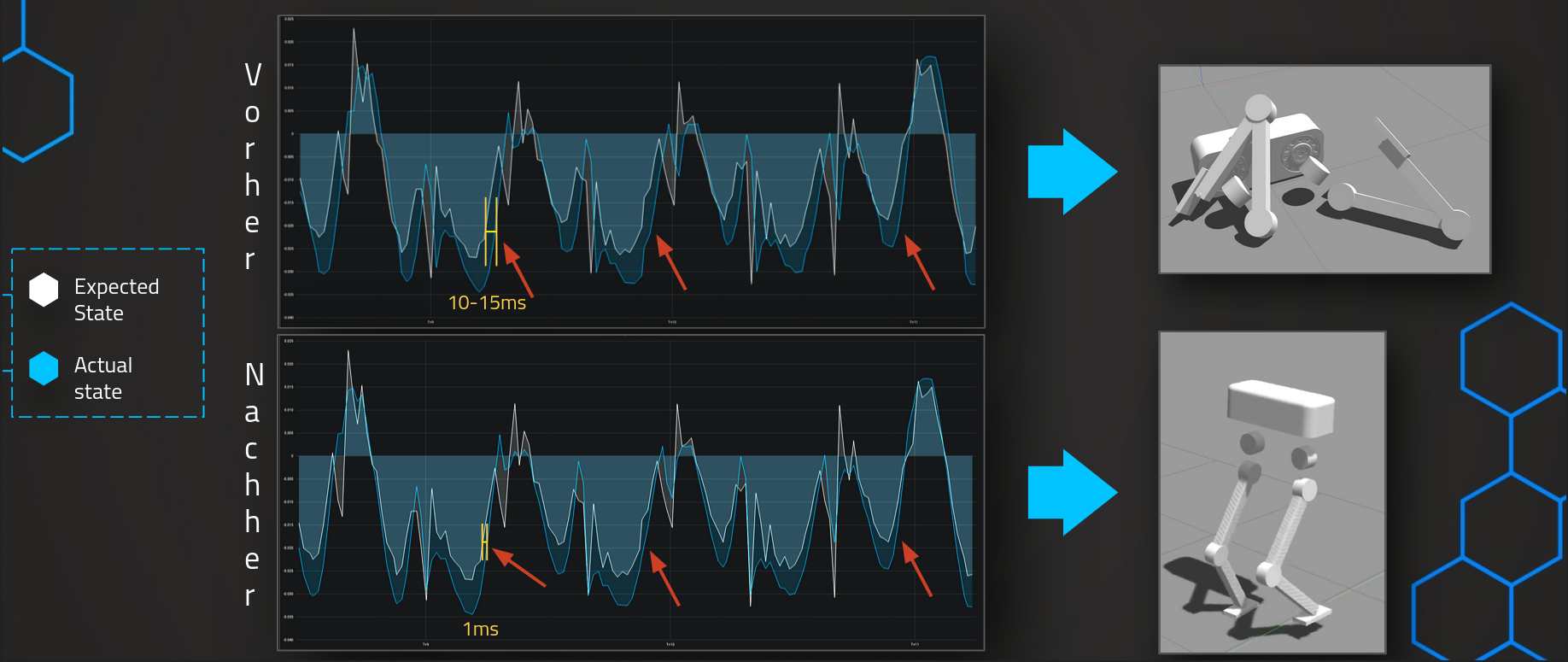

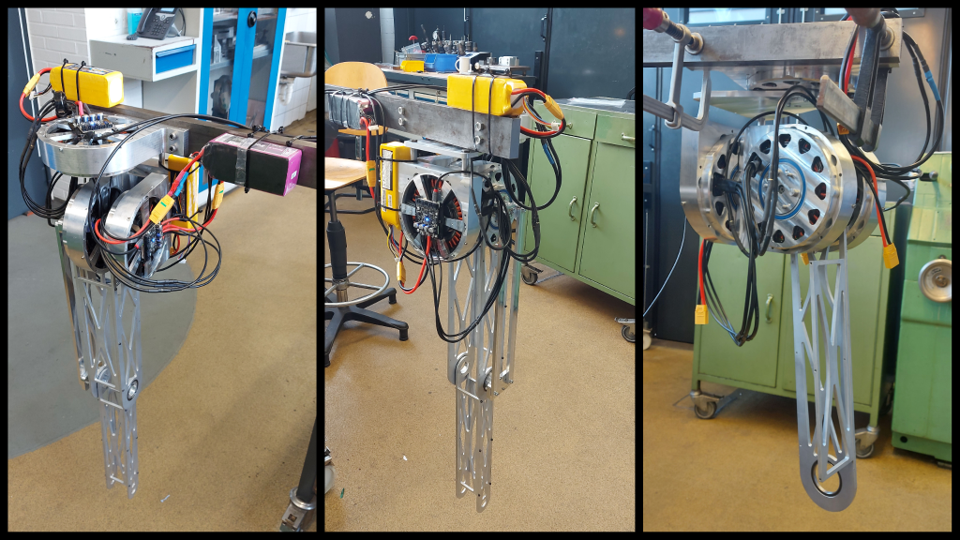

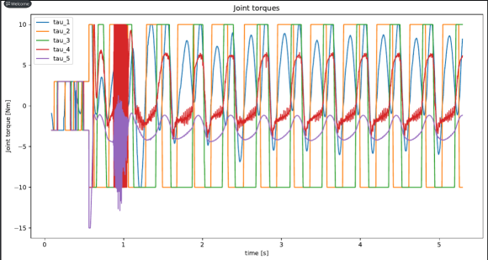

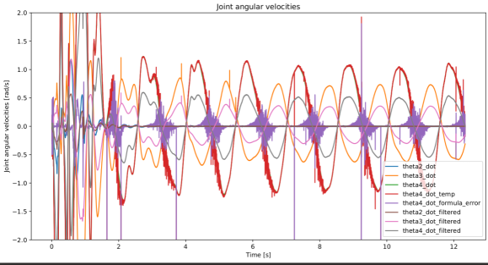

Below are the only plots I could find, just to make it a bit more scientific :)

![]()

![]()

This is everything I have so far because the exams were coming up. But next would be refining a lot of mechanical issues I only patched up due while trying to get it to work, so a few mechanical redesigns and and then testing the impedance control more stable. After that I will build another leg and slowly assemble the full robot.

-

Controlling the robot in real-time via a Web UI

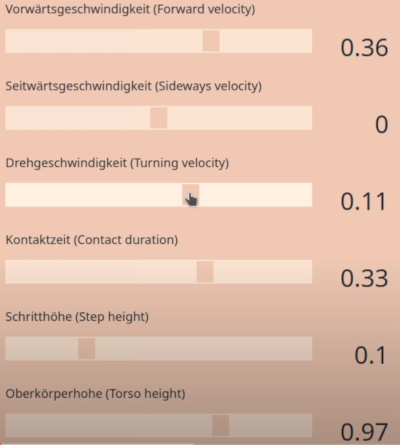

09/26/2021 at 22:27 • 0 commentsShortly after starting the project, I was already envisioning being able to control the robot in real-time via various input methods, one being a Web UI.

After figuring out one bug after the other, be it when turning in place, stepping sideways or walking circles, I started working out the details about how to implement such a Web UI. Thanks again to Daniel Berndt for helping me out with the HTML + JS implementation!

![]()

The basic idea is to have a VPS acting as a webserver for both user and robot. This VPS serves the website and syncs the slider values across clients, which was obviously important to prevent confusion. It also handles the WebRTC screen sharing, which I use to show a live view of the robot on the website.

The web UI clients communicate via a websocket and the VPS sends a number of desired states to the robot controller at regular intervals via multiple TCP sockets.

The controller parses those JSON objects using nlohmann/json and interpolates between desired value from web UI and internal reference states for the MPC to prevent abrupt value changes. Especially contact duration was incredibly sensitive to on-the-fly changes. This worked relatively well during first experiments even without the interpolation, the client side was the most time-demanding.

What happens when you give users a web UI to control a robot in a simulation, what's invevitably going to happen? Correct, someone is going to break it. Since the simulation is also quite sensitive, I spent a lot of time on a functional remote reset mechanism, i.e. being able to press a button in the web UI and having everything return to nominal. Unfortunately, the simulation really didn't like the various ways I had tried, numerical errors everywhere, leading the robot to explode without any other issues.So I ended up using a janky way of killing simulation and controller, restarting both and moving the mouse to the "Start Screen Share Button" in the browser. I was under a lot of time pressure so forgive me for this solution :)

It was a lot of fun showing to family and friends, so definitely worth it!

Here is the related Github issue: https://github.com/LouKordos/walking_controller/issues/33

-

Fixing the robots' marathon performance

09/24/2021 at 10:51 • 0 commentsIt was always important for me to test if the robot can walk indefinitely - or at least for hours on end - in the simulation, so after I got the robot walking for a few seconds, I kept trying longer runs to see what failed and how it failed. At some point, I got it to walk for about an hour, but then it would suddenly fail in very weird ways:

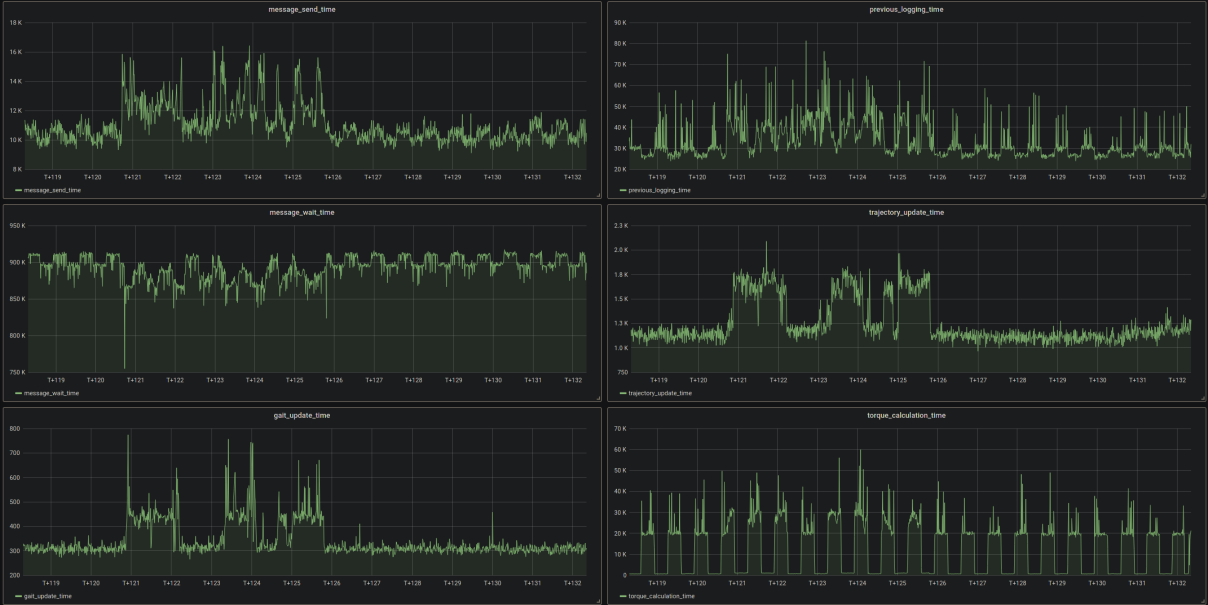

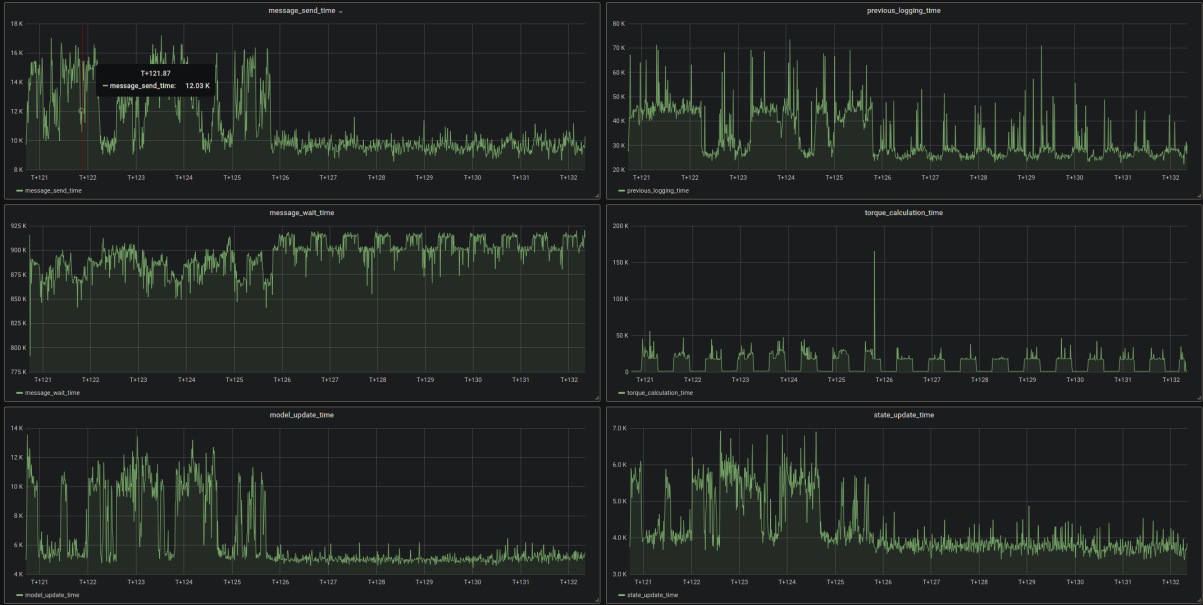

What's more, it was failing at random points in time, sometimes after a few minutes, sometimes after over an hour... That lead me to believe it was either multithreading related (deadlocks etc.) or something at the OS level. After reverting to a number of commits and experimenting with wrapper functions to make everything fully threadsafe (which was long overdue anyway), I was quite certain threads were not the problem. So I started adding high_resolution_clocks to each code block on every thread and logged all that into the CSV files:

![]()

![]()

![]()

Maybe you already noticed: There's a clear spike of "previous_logging_time", meaning the write operation must have taken over 250ms! This made a lot of sense, and even though I haven't tracked down why that operation randomly took so much longer, I suspected it was just something on the hardware cache or OS level and moved on to the proper solution, which was, of course, asynchronous IO. The implementation is not very clean because I wasn't perfectly sure that was the actual issue but I used this in the end:// From https://stackoverflow.com/questions/21126950/asynchronously-writing-to-a-file-in-c-unix#21127776 #ifndef ASYNC_LOGGER_H #define ASYNC_LOGGER_H #include <condition_variable> #include <fstream> #include <mutex> #include <queue> #include <streambuf> #include <string> #include <thread> #include <vector> struct async_buf : std::streambuf { std::ofstream out; std::mutex mutex; std::condition_variable condition; std::queue<std::vector<char>> queue; std::vector<char> buffer; bool done; std::thread thread; void worker() { bool local_done(false); std::vector<char> buf; while (!local_done) { { std::unique_lock<std::mutex> guard(this->mutex); this->condition.wait(guard, [this](){ return !this->queue.empty() || this->done; }); if (!this->queue.empty()) { buf.swap(queue.front()); queue.pop(); } local_done = this->queue.empty() && this->done; } if (!buf.empty()) { out.write(buf.data(), std::streamsize(buf.size())); buf.clear(); } } out.flush(); } public: async_buf(std::string const& name) : out(name) , buffer(128) , done(false) , thread(&async_buf::worker, this) { this->setp(this->buffer.data(), this->buffer.data() + this->buffer.size() - 1); } ~async_buf() { this->sync(); std::cout << "Async logger destructor ran" << std::endl; std::unique_lock<std::mutex>(this->mutex), (this->done = true); this->condition.notify_one(); this->thread.join(); } int overflow(int c) { if (c != std::char_traits<char>::eof()) { *this->pptr() = std::char_traits<char>::to_char_type(c); this->pbump(1); } return this->sync() != -1 ? std::char_traits<char>::not_eof(c): std::char_traits<char>::eof(); } int sync() { if (this->pbase() != this->pptr()) { this->buffer.resize(std::size_t(this->pptr() - this->pbase())); { std::unique_lock<std::mutex> guard(this->mutex); this->queue.push(std::move(this->buffer)); } this->condition.notify_one(); this->buffer = std::vector<char>(128); this->setp(this->buffer.data(), this->buffer.data() + this->buffer.size() - 1); } return 0; } }; #endifThe file: https://github.com/LouKordos/walking_controller/blob/develop/src/include/async_logger.hpp

Many thanks to the Stackoverflow fellow :)

You can also read about this issue on my Github repo where you will see I also checked if the solver time was spiking instead of the loop around it, maybe because the cartesian position values became increasingly large, but that was not the case:

![]()

After fixing this issue with async IO, the robot was able to walk for over 4 hours, after which the limits of the simulation started to show... More on that in another log :)

-

The first major issue: Delay Compensation

09/24/2021 at 10:34 • 0 commentsAfter I had fully implemented MPC in a Jupyter Notebook using CasADi and the point mass state space model, I wanted to test the same model in the physics-based simulation environment Gazebo, using the Bullet physics engine.

To do that, I basically rewrote the Jupyter Notebook in C++, and generated a file describing the formulated optimization problem using the CasADi export feature.

I also made sure that the structure of my software is fully modular, meaning the controller runs completely separated from Gazebo and only receives state information at regular intervals, after which it processes this state and sends back a control action, more precisely torque commands for each joint of the robot. They currently communicate via a local UDP connection, which I will change to UNIX sockets in the near future.

This allows me to switch back and forth from simulation and real robot without changing anything about the controller, which was very important to me.

So after I finished the Gazebo Plugin that sends state messages via a UDP socket and implemented a basic version of the MPC in C++, I tested the controller using a simple floating torso in Gazebo and used the API to directly apply forces in the world frame for debugging purposes.

However, I quickly realized something was wrong because the torso kept "flying away" in the simulation, indicating wrong forces were being applied by the controller.

This went on for a while, and I started logging every value I could think of to a CSV file. Then, I initially used GNU Octave to plot the CSV values, and after looking at some very conclusive plots

![Image]() ... I started logging both controller side and simulation side, suspecting the controller is working with too old state information. And there it was!

... I started logging both controller side and simulation side, suspecting the controller is working with too old state information. And there it was!![]()

There was a clear difference of about 15ms between latest logged simulation state and the state the controller was using for the MPC calculations, which meant outdated forces were sent to Gazebo and the controller was "lagging behind" by about one time step. No wonder it was constantly exploding in the simulation.After trying a few approaches of fixing the problem, I settled on simply stepping the discretized model by one time step and using that "compensated" state for further MPC calculations: See here

// Step the model one timestep and use the resulting state as the initial state for the solver. This compensates for the roughly 1 sample delay due to the solver time P_param.block<n,1>(0, 0) = step_discrete_model(x_t, u_t, r_x_left, r_x_right, r_y_left, r_y_right, r_z_left, r_z_right);This resulted in the floating torso being stabilized in Gazebo, which was a great first step! I do have to mention, though, that a few other bugs were fixed while trying to find the root cause, so stuff like a few sign errors were probably also part of the cause :)

REDACTED - The First Fully Open Bipedal Robot

TLDR: REDACTED is the first bipedal robot with an actual fully open-source software AND hardware stack.

Loukas K.

Loukas K.

... I started logging both controller side and simulation side, suspecting the controller is working with too old state information. And there it was!

... I started logging both controller side and simulation side, suspecting the controller is working with too old state information. And there it was!