-

1. Wire your robot

Follow the wiring diagram below to wire together your Raspberry Pi, buck converter, USB camera, motors, motor driver, ultrasonic sensors, and battery:

![]()

The Creepsy robot uses an assembled SCUTTLE Rover base with some modifications. Creepsy does not use the camera that came with the SCUTTLE Rover, because its cable is not long enough to let the camera be attached to the top of the robot. Creepsy also does not use the encoders or the batteries that come with the kit. These changes are reflected in the wiring diagram.

One thing that the diagram does not reflect is the speaker. Plug the USB speaker into the USB port on the Raspberry Pi that is not being used by the camera.

-

2. Configure your components

In the Viam app, create a new robot and give it a name like Creepsy. Follow the instructions on the Setup tab to install `viam-server` on your Raspberry Pi and connect to your robot.

Navigate to the Config tab of your robot’s page in the Viam app. Click on the Components subtab.

- Configure the board

Add a board component to represent the Raspberry Pi:

Click the Create component button in the lower-left corner of the page. Select type `board` and model `pi`. Enter `local` as the name, then click Create.

You can name your board whatever you want as long as you refer to it by the same name in your code.

- Configure the motors

Add your right motor:

Click Create component in the lower-left corner of the page. Select type `motor`, then select model `gpio`. Enter `rightMotor` as the name, then click Create.

After clicking Create, a panel will pop up with empty sections for Attributes, Component Pin Assignment, and other information.

![Alt text: rightMotor component panel with empty sections for Attributes, Component Pin Assignment, and other information.]()

In the Board drop-down within attributes, choose the name of the board, `local`, to which the motor is wired. This will ensure that the board initializes before the motor when the robot boots up.

Then set Max RPM to 100 and enable direction flip.

In the Component Pin Assignment section, toggle the type to In1/In2. In the drop-downs for A/In1 and B/In2, choose `15 GPIO 22` and `16 GPIO 23`, corresponding to the right motor wiring. Leave the PWM (pulse-width modulation) pin blank, because this specific motor driver’s configuration does not require a separate PWM pin.

![Motor component configured in the Viam app, the component tab is named rightMotor, with a type attribute motor and model attribute gpio. It has the attributes as of the board as local, encoder as non-encoded, max rpm as 1000, component pin assignment type as In1/In2, enable pins as neither, a/In1 as 15 GPIO 22, b/In2 as 16 GPIO 23, pwm as blank.]()

Now let’s add the left motor, which is similar to the right motor. Add your left motor with the name `leftMotor`, type `motor`, and model `gpio`. Select `local` from the Board drop-down, set Max RPM to `100`, configure the motor’s pins as A/In1 and B/In2 corresponding to `12 GPIO 18` and `11 GPIO 17` respectively (according to the wiring diagram), and leave PWM blank.

- Configure the base

Next, add a base component, which describes the geometry of your chassis and wheels so the software can calculate how to steer the rover in a coordinated way:

Click Create component. Select `base` for type and `wheeled` for model. Name your base `creepsy-base`, then click Create.

In the Right Motors drop-down, select `rightMotor`, and in the Left Motors drop-down select `leftMotor`. Enter `250` for Wheel Circumference (mm) and `400` for Width (mm). The width describes the distance between the midpoints of the wheels. Add `local`, `rightMotor`, and `leftMotor` to the Depends on field.

![creepsy-base component panel filled with attributes right motors as rightMotor, left motors as leftMotor, wheel circumference as 250, and width as 400. It depends on local, rightMotor, and leftMotor.]()
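To see why the wheel circumference and width matter, here is a minimal sketch of textbook differential-drive arithmetic using the values entered above. It is an illustration only, not Viam's actual implementation; the function names are hypothetical, and the 800 mm, 250 mm/s, and 45 degree inputs are the ones the robot logic later in this tutorial passes to the base.

```python
import math

WHEEL_CIRCUMFERENCE_MM = 250  # Wheel Circumference (mm) from the base config
WIDTH_MM = 400                # Width (mm): distance between wheel midpoints
MAX_RPM = 100                 # Max RPM set on both motors


def straight_move(distance_mm: float, velocity_mm_s: float) -> tuple[float, float]:
    """Return (wheel revolutions, wheel RPM) needed for a straight move."""
    revolutions = distance_mm / WHEEL_CIRCUMFERENCE_MM
    rpm = velocity_mm_s / WHEEL_CIRCUMFERENCE_MM * 60
    return revolutions, rpm


def spin_in_place(angle_deg: float) -> float:
    """Return the revolutions each wheel makes (in opposite directions) to spin in place."""
    # Each wheel travels along a circle whose diameter is the base width.
    arc_mm = math.pi * WIDTH_MM * angle_deg / 360
    return arc_mm / WHEEL_CIRCUMFERENCE_MM


revs, rpm = straight_move(800, 250)
print(f"straight: {revs:.2f} revolutions at {rpm:.0f} RPM (limit {MAX_RPM})")
print(f"spin 45 degrees: {spin_in_place(45):.3f} revolutions per wheel")
```

Under these assumptions, a straight move at 250 mm/s needs 60 RPM, which stays below the 100 RPM limit configured on the motors. If your wheels or chassis differ, measure them and update both the config and these constants.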

- Configure the camera

Add the camera component:

Click Create component. Select type `camera` and model `webcam`. Name it `cam` and click Create.

In the configuration panel, click the video path field. If your robot is connected to the Viam app, you will see a drop-down populated with available camera names.

Select the camera you want to use. If you are unsure which camera to select, select one, save the configuration, and go to the Control tab to confirm you can see the expected video stream.

![cam component panel with type camera and model webcam, and the usb camera selected as the video path.]()

Then make it depend on `local` so it initializes after the board component.

- Configure the ultrasonic sensors

Add a sensor component:

Click Create component. Select type `sensor` and model `ultrasonic`. Name your sensor `ultrasonic`, then click Create.

Then fill in the attributes: enter `38` for `echo_interrupt_pin` and `40` for `trigger_pin`, according to the wiring diagram. Enter `local` for `board`.

![Ultrasonic component panel with Attributes trigger_pin as 40, echo_interrupt_pin as 38, and board as local.]()

You also have to configure the other ultrasonic sensor. For each additional ultrasonic sensor, create a new component with a unique name like `ultrasonic2` (where “2” indicates it’s the second sensor), type `sensor`, and model `ultrasonic`. In the attributes textbox, fill in the `trigger_pin` and `echo_interrupt_pin` corresponding to the pins your ultrasonic sensors are connected to.

While this tutorial and associated code demonstrate using 2 ultrasonic sensors, you can change the number based on your preference.


-

3. Test your components

With the components configured, navigate to the Control tab. On the Control tab, you will see panels for each of your configured components.

- Motors

Click on both motor panels and check that they run as expected by clicking RUN.

![Left and right motor panels in the Control tab.]()

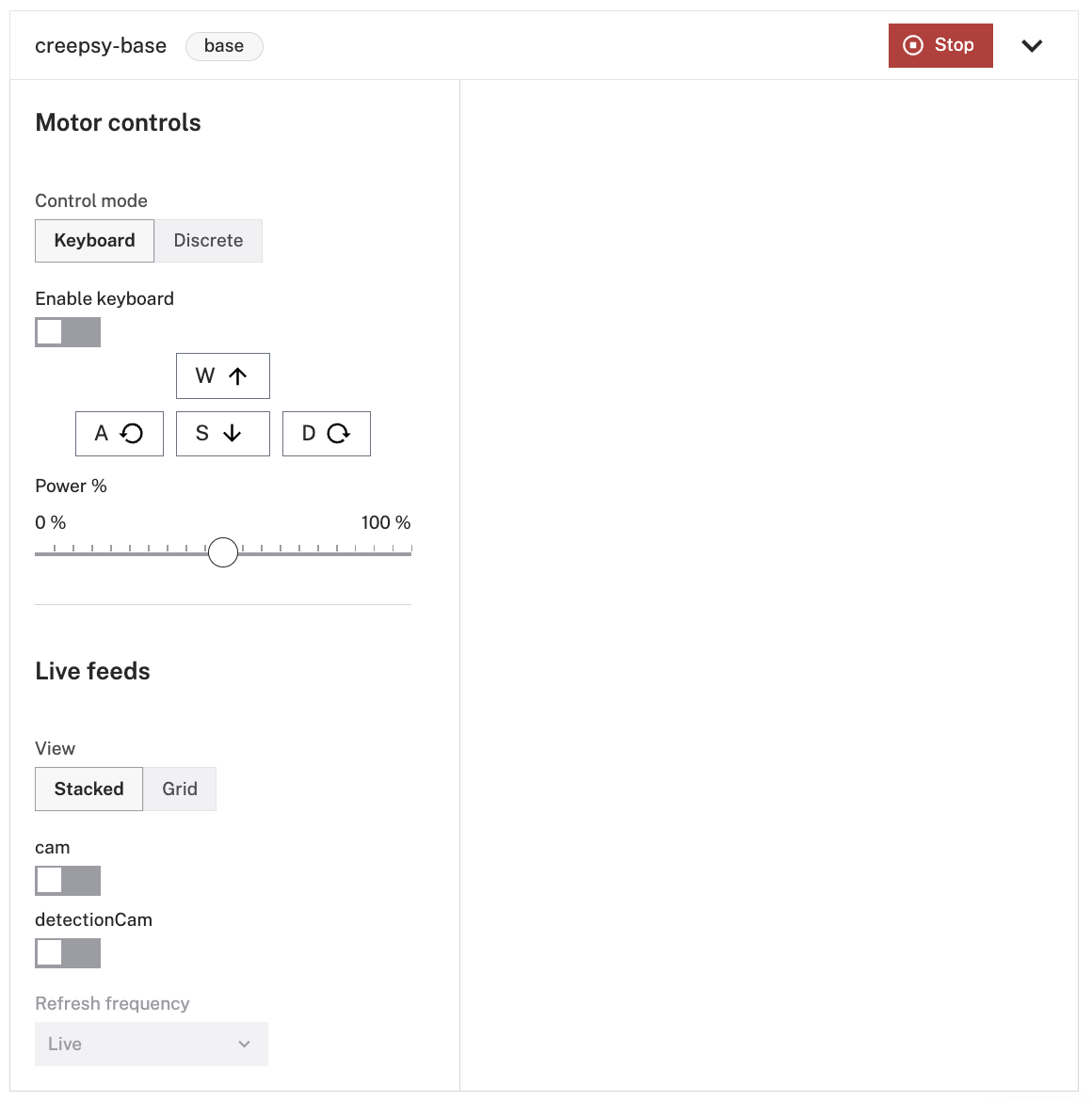

- Base

Click on the base panel and enable the keyboard. Then move your rover base around by pressing A, S, W, and D on your keyboard.

You can also adjust the power level to your preference.

![creepsy-base component in the Control tab, with Motor Control buttons to drive it around and change the power percentage.]()

- Camera

To see your camera working, click on the camera panel and toggle the camera on.

![cam component panel in the Control tab, cam toggled on and the live stream showing a person.]()

- Ultrasonic Sensors

Click on your sensors panel and test that you can get readings from all of them.

Click Get Readings to get the distance reading of the sensor.

![Ultrasonic component panel in the Control tab, sensor distance reading is 12.0770.]()


-

4. Configure your services

This tutorial uses pre-trained ML packages. If you want to train your own, you can train a model.

To use the provided Machine Learning model, copy the effdet0.tflite file and the labels.txt to your Raspberry Pi using the commands below:

    scp effdet0.tflite creepsy@creepsy.local:/home/creepsy/effdet0.tflite
    scp labels.txt creepsy@creepsy.local:/home/creepsy/labels.txt
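Before configuring the ML model service, it can help to confirm that both files actually landed on the Raspberry Pi. The following is a minimal sketch of such a check, meant to be run on the Pi itself. The helper name `missing_files` is hypothetical, and the paths are the destinations used in the `scp` commands above.

```python
from pathlib import Path

# Destination paths taken from the scp commands above.
MODEL_PATH = Path("/home/creepsy/effdet0.tflite")
LABEL_PATH = Path("/home/creepsy/labels.txt")


def missing_files(paths):
    """Return the paths that do not exist as files or that are empty."""
    return [p for p in paths if not (p.is_file() and p.stat().st_size > 0)]


if __name__ == "__main__":
    missing = missing_files([MODEL_PATH, LABEL_PATH])
    if missing:
        print("Missing or empty:", ", ".join(str(p) for p in missing))
    else:
        print("Model and label files are in place.")
```

If a file is reported as missing, rerun the corresponding `scp` command and check the username and hostname.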

Click on the Services subtab.

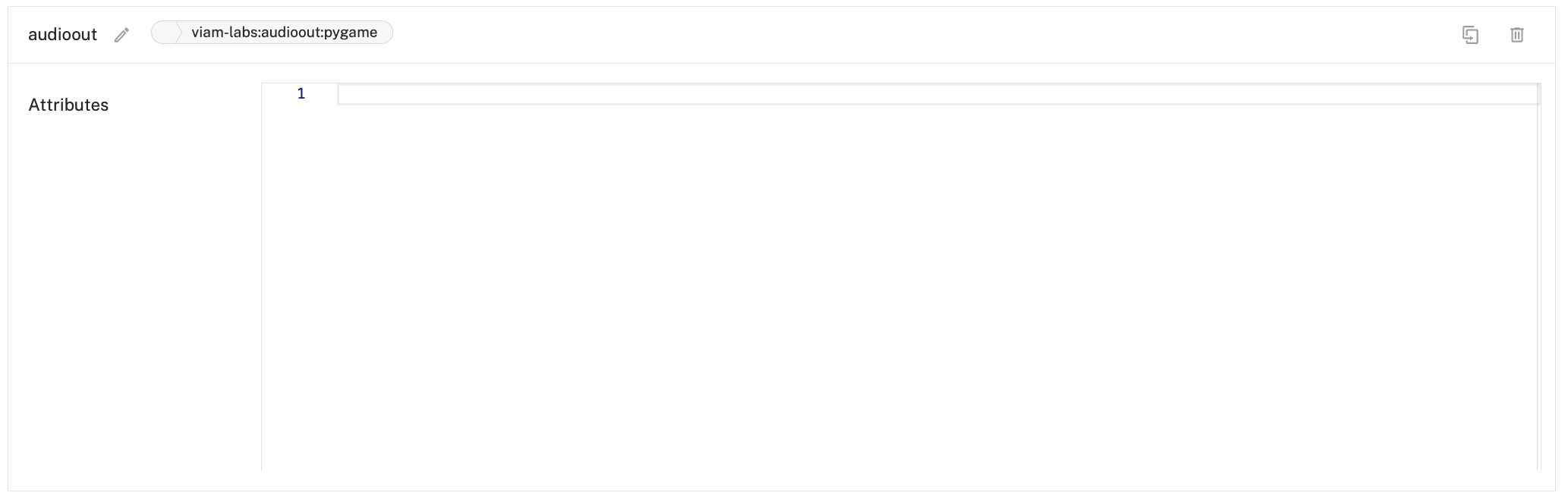

- Configure the "audioout" custom resource

Add the "audioout" custom resource from the repository to play audio files through the speaker:

It does not require any configuration in the Viam app.

- Configure the ML model service

Add an mlmodel service:

Click Create service in the lower-left corner of the page. Select type `ML Model` and model `TFLite CPU`. Enter `people` for the name of your service, then click Create.

In the new ML Model service panel, configure your service.

![mlmodel service panel with empty sections for Model Path, and Optional Settings such as Label Path and Number of threads.]()

Select Path to existing model on robot for the Deployment field.

Then specify the absolute Model path as `/home/creepsy/effdet0.tflite` and any Optional settings, such as the absolute Label path as `/home/creepsy/labels.txt` and the Number of threads as `1`.

![mlmodel service panel, Deployment selected as Path to Existing Model On Robot, Model Path filled as /home/creepsy/effdet0.tflite and Label Path filled as /home/creepsy/labels.txt, Number of threads is 1.]()

- Configure an ML model detector

Add a vision service detector:

Click Create service in the lower-left corner of the page. Select type `Vision`, then select model `mlmodel`. Enter `myPeopleDetector` as the name, then click Create.

In the new vision service panel, configure your service.

Select `people` from the ML Model drop-down.

![vision service panel called myPeopleDetector with filled-in Attributes section. ML Model name is “people”.]()

- Configure the detection camera

To be able to test that the vision service is working, add a transform camera which will add bounding boxes and labels around the objects the service detects.

Click on the Components subtab, then click Create component in the lower-left corner of the page. Select type `camera`, then select model `transform`. Enter `detectionCam` as the name, then click Create.

![detectionCam component panel with type camera and model transform, Attributes section has source and pipeline but they are empty.]()

In the new transform camera panel, replace the attributes JSON object with the following object, which specifies the camera source that the `transform` camera will be using and defines a pipeline that adds the defined `myPeopleDetector`:

    {
      "pipeline": [
        {
          "attributes": {
            "confidence_threshold": 0.5,
            "detector_name": "myPeopleDetector"
          },
          "type": "detections"
        }
      ],
      "source": "cam"
    }

Click Save config in the bottom left corner of the screen.

Your configuration should now resemble the following:

![detectionCam component panel with type camera and model transform, Attributes section filled with source and pipeline information.]()
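To illustrate what a confidence threshold such as the `0.5` in the pipeline does, here is a minimal sketch that filters a list of detections. The `Detection` class is a hypothetical stand-in, not the Viam SDK type, and whether the real pipeline treats the threshold as inclusive is an assumption. The sample confidences are made up for illustration.

```python
from dataclasses import dataclass


@dataclass
class Detection:
    """Hypothetical stand-in for a detection result (not the Viam SDK type)."""
    class_name: str
    confidence: float


def keep_confident(detections, threshold=0.5, label=None):
    """Keep detections at or above the threshold, optionally for one label only."""
    return [
        d for d in detections
        if d.confidence >= threshold and (label is None or d.class_name == label)
    ]


# Made-up sample values for illustration.
sample = [Detection("Person", 0.63), Detection("Person", 0.41), Detection("Person", 0.59)]

print(len(keep_confident(sample)))                 # two detections pass at 0.5
print(len(keep_confident(sample, threshold=0.7)))  # a stricter cut-off drops all three
```

A lower threshold draws more boxes but admits more false positives; a higher one makes the robot more conservative about what it counts as a person.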


-

5. Test your detection camera

Now you can test if the detections work. Navigate to the Control tab and click on the `detectionCam` panel. Toggle the camera on to start the video stream.

![detectionCam component panel in the Control tab, DetectionCam toggled on and a person is in front of the frame with a red detection box around her saying Person: 0.63]()

You can also see your physical camera stream and detection camera stream together on the base panel.

![creepsy-base component panel in the Control tab, with two camera streams on the right showing the same person, the bottom with a red detection box around him saying Person: 0.59]()

At this point, it is a simple detection camera: it will detect any object in the `labels.txt` file. Since we only have a person label in the file, it will only detect people.

-

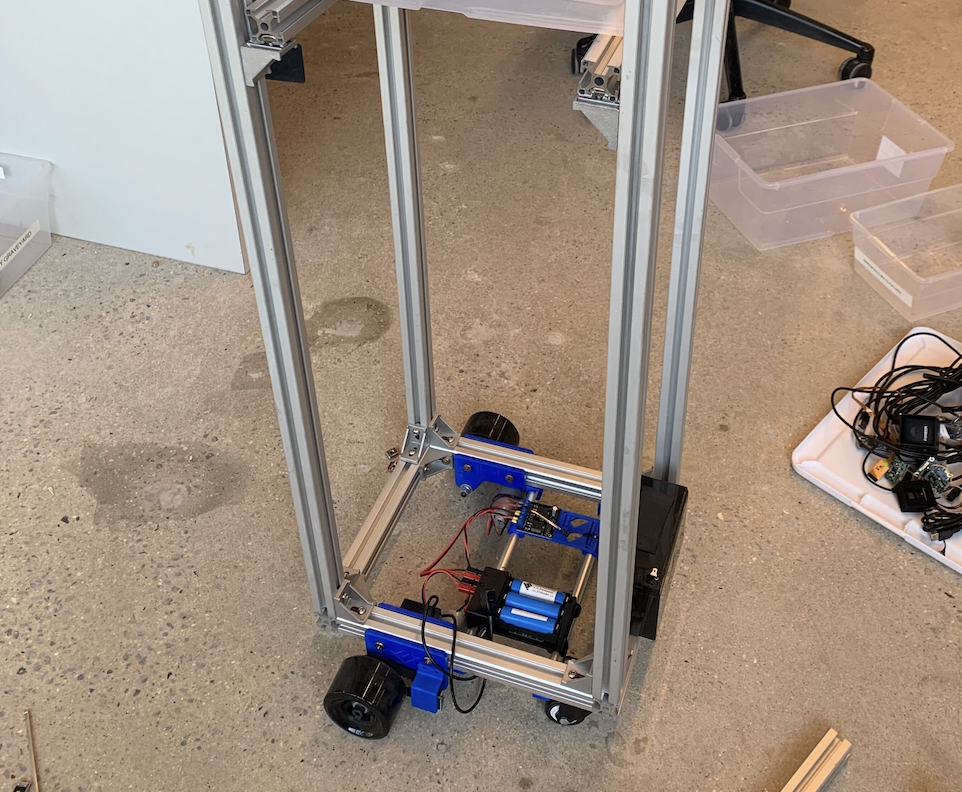

6. Design your robot

Now that you have all your components wired, configured, and tested, you can assemble your robot.

Add four 3-foot-long (or longer, if you want the ghost to be bigger) T-slotted framing rails along the corners of the SCUTTLE Rover base to make it a tall structure. Then add two 12-inch-long T-slotted framing rails in the middle of the structure at the height that you want to hold the box. Secure them using T-slotted framing structural brackets.

![four T-slotted framing rails connected to the Scuttle base with brackets for height, and two T-slotted framing rails connected to underneath the box with brackets for holding the circular head shape]()

Next, add the wired Raspberry Pi, motor driver, and 12V battery (or the SCUTTLE batteries) to the base.

You can use the 3D-printed holders that come with the assembled SCUTTLE base for the Raspberry Pi, batteries, and motor driver. You can also print holders based on SCUTTLE designs from GrabCAD.

Secure the buck converter with hook-and-loop tape, double-sided tape, or a 3D-printed holder.

![motor driver secured to the robot base with 3D printed attachment and wired to the Raspberry Pi.]()

For ultrasonic sensors to fit the framing, I recommend 3D printing enclosures. This step is optional but makes the project look more aesthetically pleasing and ensures that the sensors don’t fall out as your robot moves around.

You can design your own enclosure, or you can use the same design I used:

![3D printed enclosure of the ultrasonic sensor.]()

The STL files we used can be found in the project repository. (SCUTTLE has a design for a 3D-printed enclosure with a twist bracket that fits the rails.)

If you decide not to use a 3D printer, you can tape the ultrasonic sensors to the rails. We recommend that you place them within the frame, one under the top rails (this will be the eye in the design) and one under the rover base, so they don’t touch people or obstacles as the robot moves around, which could cause them to fall off or get damaged. Placing one on top and one on the bottom also gives more height coverage for obstacle avoidance.

![A finger pointing to the placement of where the ultrasonic sensor would live within the rover base.]()

Add the USB speaker to the top rail. I used zip ties to secure it.

Use hook-and-loop fasteners or something similar to secure the USB camera to the top rails, facing the front. In the design, this will be the mouth.

Now we are ready to make Creepsy look scary! Add a circular shape to the top so it can look like a head. Then add a white sheet over it to make it look like a ghost.

Now cut the eye holes, one for the speaker and one for the top ultrasonic sensor.

-

7. Add the robot logic

Download the full code onto your computer.

Let’s take a look at what it does. First, the code imports the required libraries:

    import asyncio
    import os

    from audioout_python import Audioout
    from viam.robot.client import RobotClient
    from viam.rpc.dial import Credentials, DialOptions
    from viam.components.sensor import Sensor
    from viam.components.base import Base
    from viam.services.vision import VisionClient

Then it connects to our robot using a robot location secret and address. Replace these values with your robot’s own location secret and address, which you can obtain from the Code sample tab:

    robot_secret = os.getenv('ROBOT_SECRET') or ''
    robot_address = os.getenv('ROBOT_ADDRESS') or ''
    # change this if you named your base differently in your robot configuration
    base_name = os.getenv('ROBOT_BASE') or 'creepsy-base'
    # change this if you named your camera differently in your robot configuration
    camera_name = os.getenv('ROBOT_CAMERA') or 'cam'
    # change this if you named your sensors differently in your robot configuration
    sensor_names = (os.getenv("ROBOT_SENSORS") or "ultrasonic,ultrasonic2").split(",")
    # change this if you named your detector differently in your robot configuration
    detector_name = os.getenv('DETECTOR_NAME') or 'myPeopleDetector'
    # change this if you named your audioout service differently in your robot configuration
    audioout_name = os.getenv('AUDIOOUT_NAME') or 'audioout'
    # environment variables are strings, so convert the pause to a number of seconds
    pause_interval = float(os.getenv('PAUSE_INTERVAL') or 3)
    chase_label = os.getenv('CHASE_LABEL') or 'person'

LOCATION SECRET

By default, the sample code does not include your robot location secret. We strongly recommend that you add your location secret as an environment variable and import this variable into your development environment as needed.

To show your robot’s location secret in the sample code, toggle Include secret on the Code sample tab. You can also see your location secret on the locations page.

Do not share your location secret, part secret, or robot address publicly. Sharing this information could compromise your system security by allowing unauthorized access to your robot, or to the computer running your robot.
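One way to keep the secret out of the source file is to read it from the environment and fail early when it is missing. This is a minimal sketch of that idea; `require_env` is a hypothetical helper, not part of the Viam SDK or of the tutorial's code, and the variable names match the ones the tutorial code reads.

```python
import os


def require_env(name: str) -> str:
    """Return the value of an environment variable, or raise if it is unset or empty."""
    value = os.getenv(name, "")
    if not value:
        raise RuntimeError(f"Set the {name} environment variable before running the script")
    return value


# Example usage, assuming both variables were exported in the shell beforehand:
# robot_secret = require_env("ROBOT_SECRET")
# robot_address = require_env("ROBOT_ADDRESS")
```

Compared with falling back to an empty string, this surfaces a missing secret as a clear error message instead of a failed connection later on.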

Next, the code defines functions to play and stop the sounds. The first method, `play_sound()`, starts to play the sound. The second method, `stop_sound()`, stops the sound from playing.

    async def play_sound(ao, filename, loop_count, block):
        print("starting play sound " + filename)
        await ao.play("sounds/" + filename, loop_count, 0, 0, block)
        print("done play sound " + filename)


    async def stop_sound(ao):
        print("stopping sound")
        await ao.stop()
        print("done stopping sound")

Next, the code defines functions for obstacle detection. The first method, `obstacle_detect()`, gets readings from a sensor, which is used by the second method, `gather_obstacle_readings()`, to gather all the distance readings from a list of sensors. Lastly, the third method, `obstacle_detect_loop()`, uses an infinite loop to periodically check the readings to stop the base if it’s closer than a certain distance from an obstacle:

    async def obstacle_detect(sensor: Sensor):
        reading = (await sensor.get_readings())["distance"]
        return reading


    async def gather_obstacle_readings(sensors: list[Sensor]):
        return await asyncio.gather(*[obstacle_detect(sensor) for sensor in sensors])


    async def obstacle_detect_loop(sensors: list[Sensor], base: Base):
        while True:
            distances = await gather_obstacle_readings(sensors)
            if any(distance < 0.4 for distance in distances):
                # stop the base if moving straight
                if base_state == "straight":
                    await base.stop()
                    print("obstacle in front")
            await asyncio.sleep(0.01)

Then, we define a person detection loop, where the robot is constantly looking for a person, and if it finds the person, it goes toward them as long as there are no obstacles in front. It defines an action called 'chase', and starts playing a scary sound.

If it doesn’t find a person, it will continue looking by rotating the robot base 45 degrees at a time and looking again.

It checks specifically for detections with the label `Person` in the `labels.txt` file:

    async def person_detect(detector: VisionClient, sensors: list[Sensor], base: Base, ao):
        while True:
            action = ""
            global base_state
            # look for a person
            print("will detect")
            detections = await detector.get_detections_from_camera(camera_name, timeout=10)
            print("got detections")
            for d in detections:
                if d.confidence > .7:
                    print(d.class_name)
                    if (d.class_name == "Person"):
                        action = "chase"

            if (action == "chase"):
                await stop_sound(ao)
                await play_sound(ao, 'possessed-laughter.mp3', 10, False)
                print("I have to chase the person")
                # first manually call obstacle_detect - don't even start moving if something is in the way
                distances = await gather_obstacle_readings(sensors)
                if all(distance > 0.4 for distance in distances):
                    print("will move straight")
                    base_state = "straight"
                    await base.move_straight(distance=800, velocity=250)
                    base_state = "stopped"
            else:
                await stop_sound(ao)
                await play_sound(ao, 'spooky-thunder.mp3', 0, False)
                print("I will turn and look for a person")
                base_state = "spinning"
                await base.spin(45, 45)
                base_state = "stopped"

            print("I will sleep")
            await asyncio.sleep(pause_interval)

Finally, the `main()` function initializes the base, the sensors, the detector, and ao (audio output). It also creates two background tasks running asynchronously, one looking for obstacles and avoiding them (`obstacle_task`), and one looking for people and moving towards them (`person_task`):

    async def main():
        robot = await connect()
        base = Base.from_robot(robot, base_name)
        sensors = [Sensor.from_robot(robot, sensor_name) for sensor_name in sensor_names]
        detector = VisionClient.from_robot(robot, detector_name)
        ao = Audioout.from_robot(robot, audioout_name)

        await stop_sound(ao)
        await play_sound(ao, 'scream.mp3', 0, True)

        # create a background task that looks for obstacles and stops the base if its moving
        obstacle_task = asyncio.create_task(obstacle_detect_loop(sensors, base))
        # create a background task that looks for a person and moves towards them, or turns and keeps looking
        person_task = asyncio.create_task(person_detect(detector, sensors, base, ao))
        results = await asyncio.gather(obstacle_task, person_task, return_exceptions=True)
        print(results)

        await robot.close()

When you run the code, your robot should start moving and playing sounds as it searches for people to scare!
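If you want to experiment with the obstacle-check pattern before running it on hardware, the following minimal sketch simulates it with stand-in objects. `FakeSensor`, `FakeBase`, and `watch_for_obstacles` are hypothetical names that only mimic the `get_readings()` and `stop()` calls used above. Unlike the tutorial's loop, this version runs a fixed number of checks and returns, so that it terminates.

```python
import asyncio

STOP_DISTANCE_M = 0.4  # same threshold as the tutorial code


class FakeSensor:
    """Hypothetical stand-in for an ultrasonic sensor; replays canned distances."""

    def __init__(self, distances):
        self._distances = list(distances)

    async def get_readings(self):
        # Repeat the last value once the canned readings run out.
        value = self._distances.pop(0) if len(self._distances) > 1 else self._distances[0]
        return {"distance": value}


class FakeBase:
    """Hypothetical stand-in for the wheeled base; only records stop() calls."""

    def __init__(self):
        self.stopped = False

    async def stop(self):
        self.stopped = True


async def watch_for_obstacles(sensors, base, checks=5):
    """Poll all sensors concurrently and stop the base when any reading is too close."""
    for _ in range(checks):
        readings = await asyncio.gather(*(s.get_readings() for s in sensors))
        if any(r["distance"] < STOP_DISTANCE_M for r in readings):
            await base.stop()
            return True
        await asyncio.sleep(0.01)
    return False


async def demo():
    base = FakeBase()
    # The first sensor sees an obstacle approaching; the second stays clear.
    sensors = [FakeSensor([1.2, 0.9, 0.3]), FakeSensor([2.0])]
    hit = await watch_for_obstacles(sensors, base)
    return hit, base.stopped


print(asyncio.run(demo()))  # (True, True)
```

Because `asyncio.gather` awaits all sensors at once, one slow sensor does not delay reading the others, which is the same reason the tutorial code gathers its readings.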

-

8. Summary

In this tutorial, you learned how to make your own scary human-chasing robot. You no longer have to try to jump-scare people at your Halloween party.

With its people detection and obstacle avoidance technology, convenient autonomous operation, and modern design, it’s sure to impress (and scare) all your guests.

To make Creepsy even more advanced, you can try to:

- Add more ultrasonic sensors so it doesn’t hit objects at different heights; you can also attach them to a moving gantry along the side rails

- Add a depth camera to detect obstacles and how close they are to Creepsy

- Add an IMU to see if Creepsy is tipping backward

- Add a lidar

- Add LED lights to make it more fun

- Record your own scary sounds and add them to your robot

Creepsy: Scary Autonomous Human Following Robot

Creepsy, with sensors and spooky sounds, navigates obstacles, identifies guests, and moves toward them to scare them at spooktacular gatherings.

Hazal Mestci
